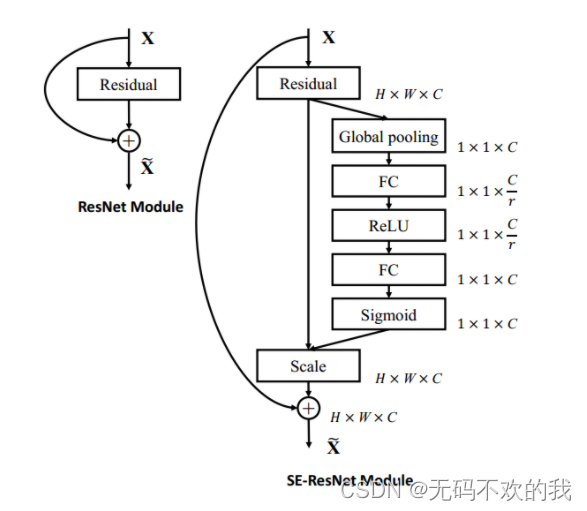

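Convolution aggregates spatial information, but channels also differ greatly in the information they carry: some channels are important, while others are of little use.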

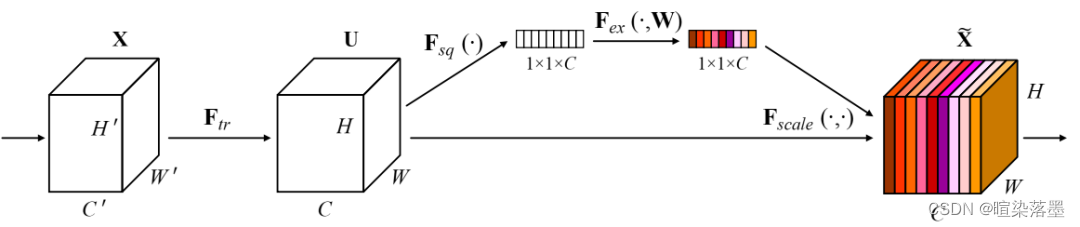

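Global average pooling (avg) is applied to each channel of the feature map U, reducing that channel to a single scalar. Two fully connected (FC) layers then map these C scalars to C weight coefficients. Each coefficient measures how important its channel of U is, and each channel of U is multiplied by its coefficient.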
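Written out, the three steps look like this. The notation follows the SENet paper and is assumed here rather than taken from the figure above: u_c is the c-th channel of U with spatial size H × W, δ is ReLU, σ is the sigmoid, and r is the reduction ratio of the first FC layer.

$$
\begin{aligned}
z_c &= \frac{1}{H \times W} \sum_{i=1}^{H} \sum_{j=1}^{W} u_c(i, j) && \text{(squeeze)} \\
\mathbf{s} &= \sigma\left(\mathbf{W}_2\, \delta(\mathbf{W}_1 \mathbf{z})\right), \quad \mathbf{W}_1 \in \mathbb{R}^{\frac{C}{r} \times C},\ \mathbf{W}_2 \in \mathbb{R}^{C \times \frac{C}{r}} && \text{(excitation)} \\
\tilde{\mathbf{x}}_c &= s_c \, \mathbf{u}_c && \text{(scale)}
\end{aligned}
$$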

Squeeze is the avg operation. The resulting scalar represents the global response of that channel over the whole feature map.
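The sketch below shows the squeeze step in plain Python so the arithmetic is visible. It assumes a single feature map stored as a nested list of shape [C][H][W]. The function name `squeeze` is hypothetical, and the batch axis that `nn.AdaptiveAvgPool2d(1)` also handles is omitted.

```python
def squeeze(feature_map):
    """Global average pooling: one scalar per channel.

    feature_map is a hypothetical plain-Python stand-in for one tensor
    of shape [C][H][W] (no batch axis).
    """
    return [
        sum(sum(row) for row in channel) / (len(channel) * len(channel[0]))
        for channel in feature_map
    ]


# Two channels, each 2 x 2
u = [
    [[1.0, 2.0], [3.0, 4.0]],
    [[0.0, 0.0], [0.0, 8.0]],
]
print(squeeze(u))  # [2.5, 2.0]
```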

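Excitation uses the weights W of two fully connected layers to learn the dependencies between channels. The first layer reduces the C channel descriptors to C/r, followed by ReLU. The second layer restores them to C.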
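Here is a minimal plain-Python sketch of the excitation step, s = sigmoid(W2 · relu(W1 · z)), with biases omitted as in the `SELayer` code below. The sizes (C = 4, r = 2) and the weight values are hypothetical and were chosen only to make the arithmetic easy to follow. In a real network, W1 and W2 are learned.

```python
import math


def relu(v):
    return [max(0.0, x) for x in v]


def sigmoid(v):
    return [1.0 / (1.0 + math.exp(-x)) for x in v]


def matvec(w, v):
    # w is a list of rows; returns the matrix-vector product w @ v
    return [sum(wi * vi for wi, vi in zip(row, v)) for row in w]


def excitation(z, w1, w2):
    """s = sigmoid(W2 . relu(W1 . z)), bias-free like SELayer."""
    return sigmoid(matvec(w2, relu(matvec(w1, z))))


# C = 4 channels, r = 2, so the hidden size is C / r = 2 (hypothetical weights)
z = [2.5, 2.0, 0.5, 1.0]           # output of the squeeze step
w1 = [[0.5, -0.5, 0.0, 0.0],       # shape (C/r, C) = (2, 4)
      [0.0, 0.0, 1.0, -1.0]]
w2 = [[1.0, 0.0],                  # shape (C, C/r) = (4, 2)
      [-1.0, 0.0],
      [0.0, 1.0],
      [0.0, -1.0]]
s = excitation(z, w1, w2)
print([round(x, 4) for x in s])    # [0.5622, 0.4378, 0.5, 0.5]
```

The bottleneck (C → C/r → C) keeps the module cheap while still letting every channel weight depend on all C channel descriptors.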

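The sigmoid function maps each output into (0, 1), so every channel receives a weight between 0 and 1. These weights are not a probability distribution. Each channel is gated independently, so the weights do not have to sum to 1, and several channels can be emphasized at the same time.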
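The sketch below contrasts sigmoid gating with a softmax over the same values. It then applies the scale step, which multiplies each channel by its weight. The feature map is again a hypothetical nested list of shape [C][H][W], and the logit values are made up for illustration.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def softmax(v):
    m = max(v)  # subtract the max for numerical stability
    e = [math.exp(x - m) for x in v]
    total = sum(e)
    return [x / total for x in e]


def scale(feature_map, weights):
    """Scale step: multiply every element of channel c by weights[c].

    feature_map is a hypothetical nested list of shape [C][H][W].
    """
    return [[[w * x for x in row] for row in channel]
            for channel, w in zip(feature_map, weights)]


logits = [3.0, 3.0, -3.0]
gates = [sigmoid(x) for x in logits]
print(sum(gates))            # about 1.95: each gate is computed independently
print(sum(softmax(logits)))  # about 1.0: softmax makes the channels compete

u = [[[1.0, 2.0]], [[4.0, 4.0]]]   # C = 2 channels, H = 1, W = 2
print(scale(u, [0.5, 0.25]))       # [[[0.5, 1.0]], [[1.0, 1.0]]]
```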

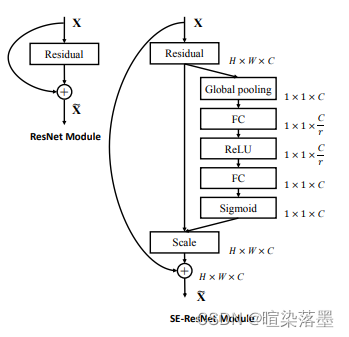

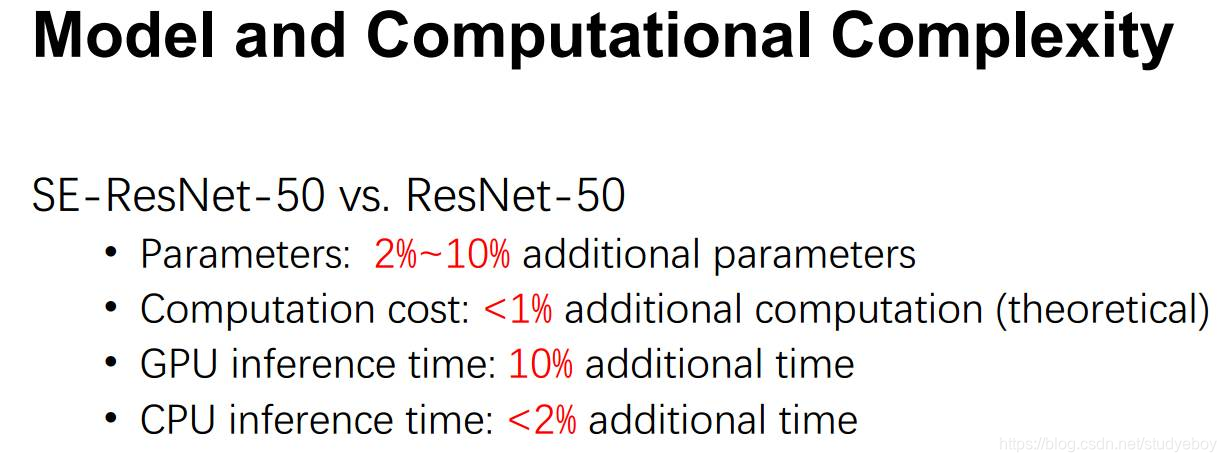

In SE-ResNet, the reduction ratio r is set to 16.
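Because both FC layers are bias-free, one SE module adds C·(C/r) + (C/r)·C = 2C²/r weights. The helper below is a hypothetical function for checking this count. With r = 16, a 256-channel SE module squeezes to 16 hidden units. A larger r makes the module cheaper but gives the excitation less capacity.

```python
def se_fc_params(channels, reduction=16):
    """Weights in the two bias-free FC layers of one SE module:
    C * (C // r) for C -> C/r, plus (C // r) * C for C/r -> C."""
    hidden = channels // reduction
    return channels * hidden + hidden * channels


print(256 // 16, se_fc_params(256))  # 16 8192
```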

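    import torch.nn as nn


    class SELayer(nn.Module):
        """Squeeze-and-Excitation layer: reweights the channels of its input."""

        def __init__(self, channel, reduction=16):
            super(SELayer, self).__init__()
            # Squeeze: global average pooling reduces each H x W map to 1 x 1
            self.avg_pool = nn.AdaptiveAvgPool2d(1)
            # Excitation: FC (C -> C/r), ReLU, FC (C/r -> C), sigmoid
            self.fc = nn.Sequential(
                nn.Linear(channel, channel // reduction, bias=False),
                nn.ReLU(inplace=True),
                nn.Linear(channel // reduction, channel, bias=False),
                nn.Sigmoid(),
            )

        def forward(self, x):
            b, c, _, _ = x.size()
            # (B, C, H, W) -> (B, C, 1, 1) -> (B, C)
            y = self.avg_pool(x).view(b, c)
            # (B, C) -> per-channel weights in (0, 1), reshaped to (B, C, 1, 1)
            y = self.fc(y).view(b, c, 1, 1)
            # Scale: broadcast the weights over H and W and reweight each channel
            return x * y.expand_as(x)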

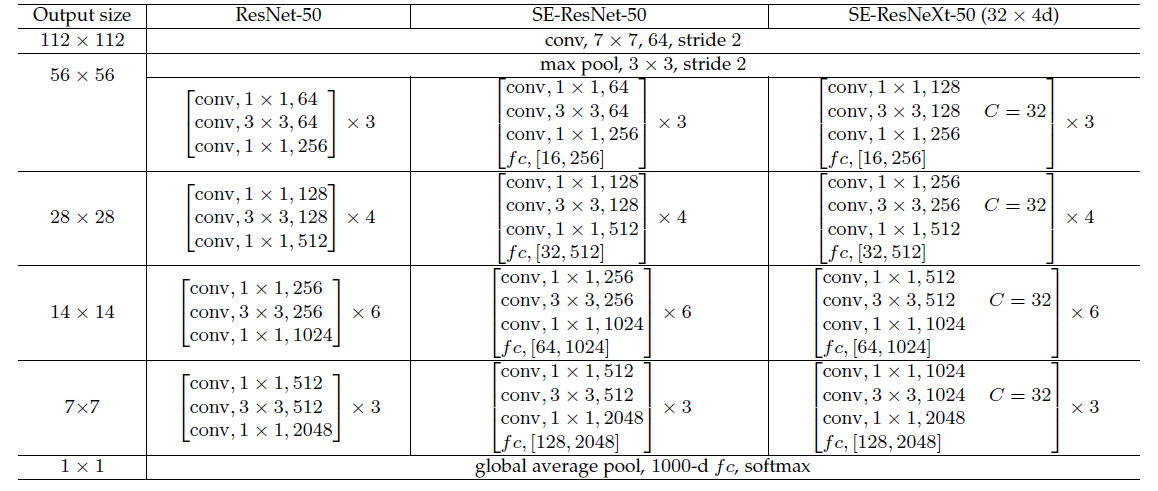

ResNet with SENet added (the SE-ResNet bottleneck block):

    import torch.nn as nn


    class SEBottleneck(nn.Module):
        """ResNet bottleneck block (1x1 -> 3x3 -> 1x1) with SE on the residual branch."""

        expansion = 4

        def __init__(self, inplanes, planes, stride=1, downsample=None, reduction=16):
            super(SEBottleneck, self).__init__()
            self.conv1 = nn.Conv2d(inplanes, planes, kernel_size=1, bias=False)
            self.bn1 = nn.BatchNorm2d(planes)
            self.conv2 = nn.Conv2d(planes, planes, kernel_size=3, stride=stride,
                                   padding=1, bias=False)
            self.bn2 = nn.BatchNorm2d(planes)
            self.conv3 = nn.Conv2d(planes, planes * 4, kernel_size=1, bias=False)
            self.bn3 = nn.BatchNorm2d(planes * 4)
            self.relu = nn.ReLU(inplace=True)
            # SELayer (defined above) recalibrates the planes * 4 output channels
            self.se = SELayer(planes * 4, reduction)
            self.downsample = downsample
            self.stride = stride

        def forward(self, x):
            residual = x

            out = self.conv1(x)
            out = self.bn1(out)
            out = self.relu(out)

            out = self.conv2(out)
            out = self.bn2(out)
            out = self.relu(out)

            out = self.conv3(out)
            out = self.bn3(out)
            # Reweight the channels of the residual branch before the addition
            out = self.se(out)

            if self.downsample is not None:
                # Match the shortcut's shape when stride or channel count changes
                residual = self.downsample(x)

            out += residual
            out = self.relu(out)
            return out
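To estimate what the SE modules cost across a whole network, the sketch below sums 2C²/r over every bottleneck block. It assumes the standard ResNet-50 layout: stages with planes 64, 128, 256, and 512, containing 3, 4, 6, and 3 blocks. It also assumes one `SELayer` per block with r = 16, as in `SEBottleneck` above. The names `RESNET50_STAGES` and `se_overhead` are hypothetical.

```python
# Assumed ResNet-50 layout: (planes, number of bottleneck blocks) per stage
RESNET50_STAGES = [(64, 3), (128, 4), (256, 6), (512, 3)]
EXPANSION = 4  # matches SEBottleneck.expansion


def se_overhead(stages, reduction=16, expansion=EXPANSION):
    """Extra weights added by one bias-free SELayer per bottleneck block."""
    total = 0
    for planes, blocks in stages:
        c = planes * expansion      # SE acts on the block's output channels
        hidden = c // reduction
        total += blocks * 2 * c * hidden
    return total


print(se_overhead(RESNET50_STAGES))  # 2514944, i.e. about 2.5 million
```

Most of the overhead comes from the last stage, where C = 2048.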

![[Attention Mechanism] Classic Network Models 1: SENet Explained and Reproduced](https://img-blog.csdnimg.cn/76f92bb7921e4293800b75d7dcdde90f.png?x-oss-process=image/watermark,type_d3F5LXplbmhlaQ,shadow_50,text_Q1NETiBASG9yaXpvbiBNYXg=,size_14,color_FFFFFF,t_70,g_se,x_16#pic_center)