Chinese Named Entity Recognition: BERT Chinese Task Walkthrough, an 18-Minute Quick Hands-On (bilibili)

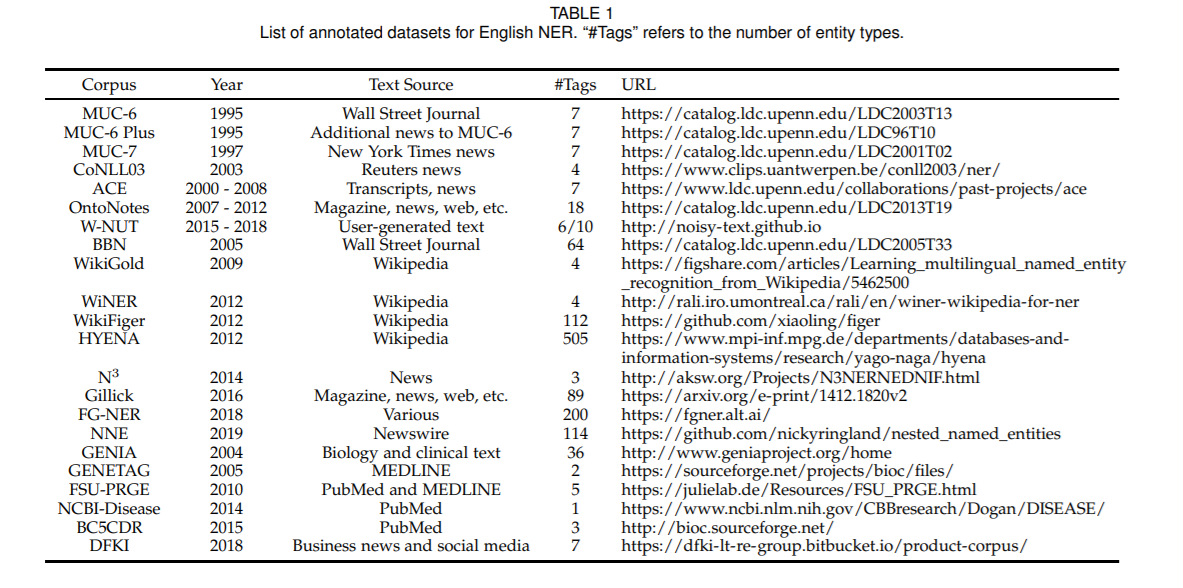

Pay attention to the comments.

    from transformers import AutoTokenizer
    import time  # used to time the whole run

    start = time.time()

    # Load the tokenizer
    tokenizer = AutoTokenizer.from_pretrained('hfl/rbt6')  # Chinese BERT
    print(tokenizer)

    # Tokenizer smoke test. No dataset is involved yet; this call only checks
    # in a Jupyter notebook that tokenization runs.
    tokenizer.batch_encode_plus(
        [
            ['海', '钓', '比', '赛', '地', '点', '在', '厦', '门', '与', '金', '门', '之', '间',
             '的', '海', '域', '。'],
            ['这', '座', '依', '山', '傍', '水', '的', '博', '物', '馆', '由', '国', '内', '一',
             '流', '的', '设', '计', '师', '主', '持', '设', '计', ',', '整', '个', '建', '筑',
             '群', '精', '美', '而', '恢', '宏', '。'],
        ],  # two test sentences
        truncation=True,
        padding=True,
        return_tensors='pt',  # return PyTorch tensors
        is_split_into_words=True)  # tell the encoder the sentences are already split

Dataset

    import torch

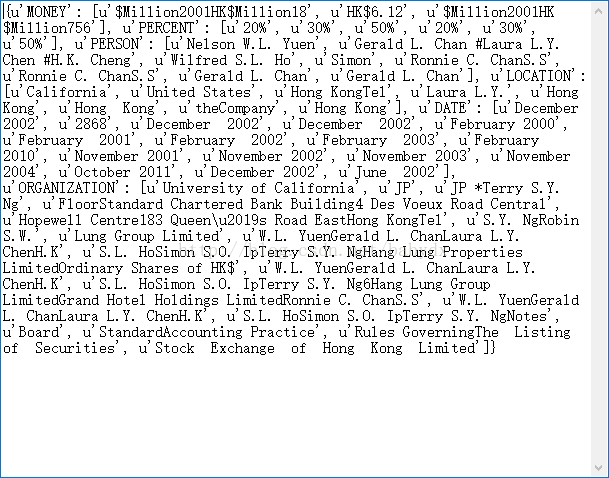

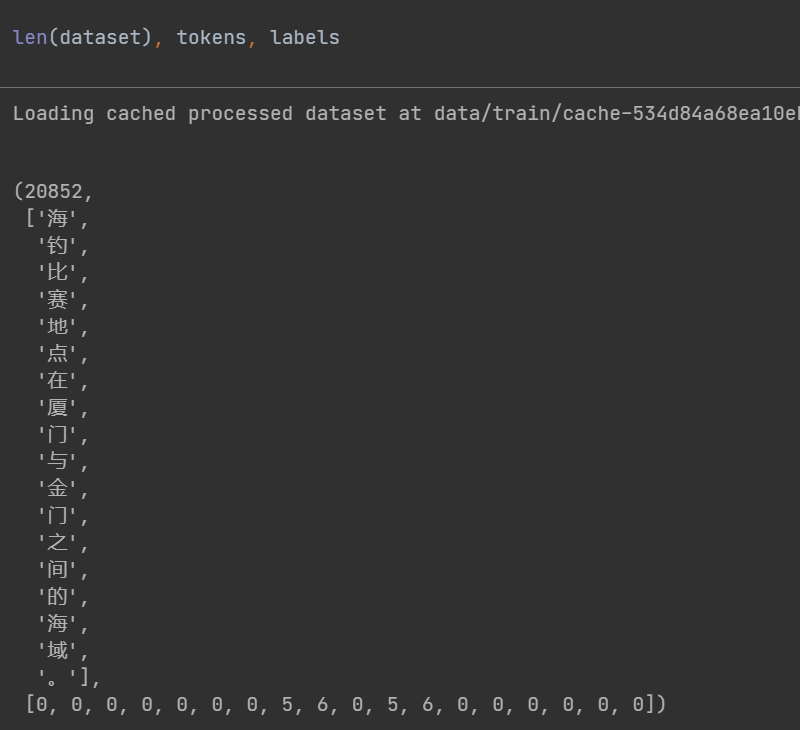

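    from datasets import load_dataset, load_from_disk


    class Dataset(torch.utils.data.Dataset):

        def __init__(self, split):
            # names = ['O', 'B-PER', 'I-PER', 'B-ORG', 'I-ORG', 'B-LOC', 'I-LOC']
            # (seen by opening the data file in a text editor). For example,
            # a three-character person name is labeled 1, 2, 2.

            # Load the dataset online
            # dataset = load_dataset(path='peoples_daily_ner', split=split)

            # Load the dataset from disk
            dataset = load_from_disk(dataset_path='./data')[split]

            # Filter out sentences that are too long (leave room for [CLS] and [SEP])
            def f(data):
                return len(data['tokens']) <= 512 - 2

            dataset = dataset.filter(f)
            self.dataset = dataset

        def __len__(self):
            return len(self.dataset)

        def __getitem__(self, i):
            tokens = self.dataset[i]['tokens']
            # 'tokens' and 'ner_tags' appear to be the dataset's default column names;
            # the People's Daily data only uses the O/B/I tags listed above
            labels = self.dataset[i]['ner_tags']
            return tokens, labels


    dataset = Dataset('train')
    tokens, labels = dataset[0]  # entity characters get a nonzero tag such as 5 or 6; others get 0
    len(dataset), tokens, labels

The last line shows the dataset size, the tokens, and the labels of the first sample, in that order.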
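To make the integer tags easier to read, here is a small sketch that maps ner_tags ids back to tag names. The names list is copied from the comment in the Dataset class; the sample sentence and its tags are made up for illustration and are not taken from the dataset.

```python
# Tag names in id order, as listed in the Dataset comment.
NAMES = ['O', 'B-PER', 'I-PER', 'B-ORG', 'I-ORG', 'B-LOC', 'I-LOC']


def decode_tags(tag_ids):
    """Map integer ner_tags to their string names."""
    return [NAMES[t] for t in tag_ids]


# Hypothetical sample: a three-character person name followed by two other characters.
sample_tokens = ['王', '小', '明', '来', '了']
sample_tags = [1, 2, 2, 0, 0]

for token, name in zip(sample_tokens, decode_tags(sample_tags)):
    print(token, name)
```

A person name therefore starts with B-PER (1) and continues with I-PER (2), which is the "1, 2, 2" pattern mentioned in the comment.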

Data collation (see comments)

Collate function:

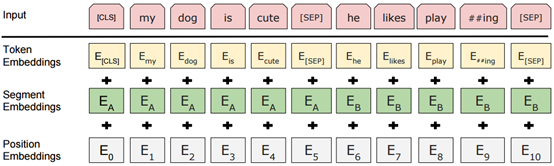

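    def collate_fn(data):
        tokens = [i[0] for i in data]  # one entry per sample; the count equals batch_size
        labels = [i[1] for i in data]  # i[1] is the label list of each (tokens, labels) sample

        # Encode the pre-split sentences
        inputs = tokenizer.batch_encode_plus(tokens,
                                             truncation=True,
                                             padding=True,
                                             return_tensors='pt',
                                             is_split_into_words=True)
        # print(tokenizer.decode(inputs['input_ids'][0]))
        # print(labels[0])

        # Padded length of this batch: the longest sentence plus [CLS] and [SEP];
        # shorter sentences are filled with [PAD] up to this length
        lens = inputs['input_ids'].shape[1]
        # print(lens)

        for i in range(len(labels)):  # 7 marks positions that are not real characters
            labels[i] = [7] + labels[i]  # prepend 7 for [CLS], e.g. [7, 0, 3, 4, 4, 4, ...]
            # print('head', labels[i])
            labels[i] += [7] * lens  # append lens copies of 7 to the tail
            # print('tail', labels[i])
            labels[i] = labels[i][:lens]  # trim every label list to the batch length

        return inputs, torch.LongTensor(labels)

Note on labels = [i[1] for i in data]: i[1] selects the label list, the second element of each (tokens, labels) pair returned by the Dataset. The [CLS] token at the start of every encoded sentence is handled separately, by prepending 7 to the labels, as the figure below shows.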
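The label padding inside collate_fn can be tried on its own with plain lists. This is a minimal sketch of that one step; pad_labels and its pad_id parameter are names introduced here for illustration, and 7 is the filler id used in the post.

```python
def pad_labels(labels, lens, pad_id=7):
    """Align each label list with a tokenized sentence of length `lens`.

    One pad_id is prepended for [CLS]; the tail is overfilled with pad_id
    (covering [SEP] and padding) and the result is trimmed to `lens`.
    """
    padded = []
    for seq in labels:
        seq = [pad_id] + list(seq)   # position 0 belongs to [CLS]
        seq += [pad_id] * lens       # overfill the tail
        padded.append(seq[:lens])    # trim to the batch length
    return padded


# Two sentences with 3 and 1 characters; the longer one gives lens = 3 + 2 = 5.
batch = [[0, 3, 4], [5]]
print(pad_labels(batch, lens=5))
```

The filler id 7 is also why the classifier has 8 outputs rather than 7: the [CLS] and [SEP] positions keep attention mask 1, so they survive the later pad removal and are trained as class 7.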

Inspect a sample batch (loader is the DataLoader defined in the complete code below):

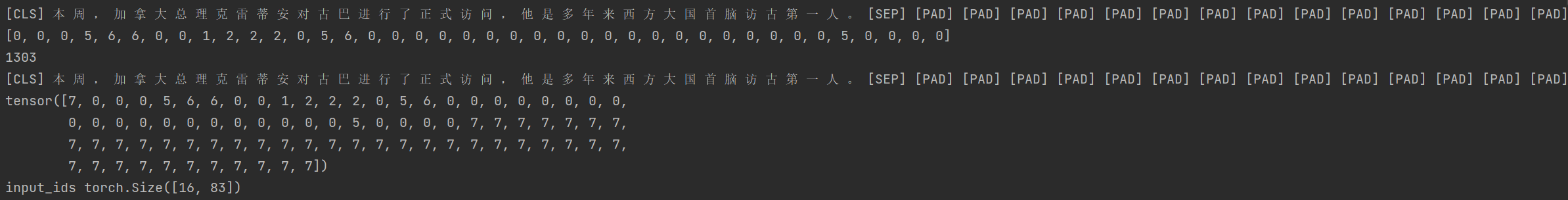

    for i, (inputs, labels) in enumerate(loader):
        break

    print(len(loader))
    print(tokenizer.decode(inputs['input_ids'][0]))
    print(labels[0])

    # e.g. input_ids torch.Size([16, 95]): 16 is batch_size, 95 is lens and varies per batch
    for k, v in inputs.items():
        print(k, v.shape)

    from transformers import AutoModel

    # Load the pretrained model
    pretrained = AutoModel.from_pretrained('hfl/rbt6')
    pretrained = pretrained.cuda()  # important: the pretrained model must also run on the GPU

    # Count parameters, in units of 10,000
    print(sum(i.numel() for i in pretrained.parameters()) / 10000)

    # Trial run of the model
    # [b, lens] -> [b, lens, 768]
    # inputs behaves like a dict wrapping three tensors, so each tensor
    # is moved to the GPU individually
    for k, v in inputs.data.items():
        inputs.data[k] = v.cuda()

    pretrained(**inputs).last_hidden_state.shape

pretrained = pretrained.cuda() puts the pretrained model on CUDA as well.

The inputs are then moved to CUDA too; this is explained after train() below.

Model definition

[python] The role and meaning of * and ** in Python (Kadima°'s blog, CSDN)

Understanding '*', '*args', '**', '**kwargs' (callinglove's blog, CSDN)
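These two links matter here because the code calls pretrained(**inputs): the ** operator unpacks a dict-like object into keyword arguments. A minimal sketch in plain Python, where fake_model is a stand-in introduced for illustration (not a transformers API) and the token ids are made up:

```python
def fake_model(input_ids, token_type_ids=None, attention_mask=None):
    """Stand-in for a model's forward(); it just reports what it received."""
    return {
        'n_ids': len(input_ids),
        'has_mask': attention_mask is not None,
    }


# Made-up ids, shaped like one encoded sentence.
inputs = {
    'input_ids': [101, 3862, 7157, 102],
    'token_type_ids': [0, 0, 0, 0],
    'attention_mask': [1, 1, 1, 1],
}

# fake_model(**inputs) is the same as
# fake_model(input_ids=..., token_type_ids=..., attention_mask=...)
result = fake_model(**inputs)
print(result)
```

Each key of the dict must match a parameter name of the function being called, which is why the tokenizer output can be passed to the model directly.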

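    # Define the downstream model
    class Model(torch.nn.Module):

        def __init__(self):
            super().__init__()
            self.tuneing = False  # fine-tuning is off by default
            self.pretrained = None  # by default the pretrained model is not part of the downstream model

            # Downstream task head
            self.rnn = torch.nn.GRU(768, 768, batch_first=True)
            self.fc = torch.nn.Linear(768, 8)

        def forward(self, inputs):
            if self.tuneing:
                # When fine-tuning, the model's own pretrained module is trained too
                out = self.pretrained(**inputs).last_hidden_state
            else:
                # Otherwise use the external pretrained model, without gradients
                with torch.no_grad():
                    out = pretrained(**inputs).last_hidden_state

            out, _ = self.rnn(out)  # the GRU also returns its final hidden state, discarded as _
            # print('before', out.shape)  # e.g. torch.Size([128, 157, 768])
            out = self.fc(out).softmax(dim=2)  # fc maps 768 -> 8 classes, so softmax runs over dim=2
            # print(out.shape)
            return out

        def fine_tuneing(self, tuneing):
            self.tuneing = tuneing
            if tuneing:
                # In fine-tuning mode the pretrained parameters are updated
                for i in pretrained.parameters():
                    i.requires_grad = True

                pretrained.train()  # put the pretrained model in training mode
                self.pretrained = pretrained  # the pretrained model becomes part of this model
            else:
                for i in pretrained.parameters():
                    i.requires_grad_(False)

                pretrained.eval()
                self.pretrained = None


    model = Model().cuda()  # the trial inputs are already on the GPU, so the model must be too
    model(inputs).shape

Utility functions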

    # Reshape the outputs and labels, and use the attention mask to drop pad positions
    def reshape_and_remove_pad(outs, labels, attention_mask):
        # Flatten to make the loss easy to compute
        # [b, lens, 8] -> [b*lens, 8]: merge the n sentences
        outs = outs.reshape(-1, 8)
        # [b, lens] -> [b*lens]: concatenate the labels of the n sentences
        labels = labels.reshape(-1)

        # Ignore results at pad positions, selected via the attention mask
        # [b, lens] -> [b*lens - pad]
        select = attention_mask.reshape(-1) == 1
        outs = outs[select]
        labels = labels[select]

        return outs, labels


    # Count correct predictions and totals
    def get_correct_and_total_count(labels, outs):
        # [b*lens, 8] -> [b*lens]
        outs = outs.argmax(dim=1)  # predicted tag: the class with the highest probability
        correct = (outs == labels).sum().item()
        total = len(labels)

        # Accuracy over labels other than 0. There are so many 0 labels that
        # including them easily inflates the accuracy.
        select = labels != 0
        outs = outs[select]
        labels = labels[select]
        correct_content = (outs == labels).sum().item()
        total_content = len(labels)

        return correct, total, correct_content, total_content

train function

    # Note: newer transformers releases deprecate this AdamW in favor of torch.optim.AdamW
    from transformers import AdamW

    # Use the GPU if available, else the CPU
    device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")
    print('Using device:', device)

    # Additional info when using CUDA
    if device.type == 'cuda':
        print(torch.cuda.get_device_name(0))
        print('Memory Usage:')
        print('Allocated:', round(torch.cuda.memory_allocated(0) / 1024 ** 3, 1), 'GB')
        print('Cached: ', round(torch.cuda.memory_reserved(0) / 1024 ** 3, 1), 'GB')


    # Training
    def train(epochs):
        # model.tuneing is set by the model.fine_tuneing(True) call further down
        lr = 2e-5 if model.tuneing else 5e-4
        device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

        optimizer = AdamW(model.parameters(), lr=lr)
        criterion = torch.nn.CrossEntropyLoss()

        print('Start training')
        model.train()
        for epoch in range(epochs):
            for step, (inputs, labels) in enumerate(loader):
                # Forward pass
                # [b, lens] -> [b, lens, 8]
                # inputs = inputs.to(device)
                for k, v in inputs.data.items():  # same as above
                    inputs.data[k] = v.cuda()
                # outs needs no explicit move; only inputs and labels do
                outs = model(inputs)
                labels = labels.to(device)
                # print('on cuda?', outs.is_cuda, labels.is_cuda)

                # Reshape outs and labels, and remove pad
                # outs -> [b, lens, 8] -> [c, 8]
                # labels -> [b, lens] -> [c]
                outs, labels = reshape_and_remove_pad(outs, labels,
                                                      inputs['attention_mask'])

                # Gradient descent
                loss = criterion(outs, labels).to(device)
                loss.backward()
                optimizer.step()
                optimizer.zero_grad()

                if step % 50 == 0:
                    counts = get_correct_and_total_count(labels, outs)
                    accuracy = counts[0] / counts[1]
                    accuracy_content = counts[2] / counts[3]
                    print(epoch, step, loss.item(), accuracy, accuracy_content)

            torch.save(model, 'model/命名实体识别_中文.model')

The first part of this code checks whether a GPU can be used and prints the graphics card currently in use.

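    for k, v in inputs.data.items():
        inputs.data[k] = v.cuda()

To train on the GPU, the input data must be on the GPU. Here inputs is not itself a tensor: it is the dict-like object unpacked earlier with **inputs, and the values inside it are tensors. The loop therefore moves each tensor inside inputs to CUDA one by one.

The items() method yields the dictionary's contents as (key, value) tuples; the for statement assigns each key to k and each value to v until the iteration ends.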
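The same items() pattern can be tried without a GPU. In this sketch, plain lists stand in for tensors and to_device is a hypothetical helper that tags a value with a device name; it only illustrates looping over a dict and replacing each value in place.

```python
def to_device(value, device):
    """Hypothetical stand-in for tensor.cuda(): returns (device, value)."""
    return (device, value)


data = {
    'input_ids': [101, 102],
    'attention_mask': [1, 1],
}

# Reassigning the values of existing keys while iterating is fine,
# because the set of keys does not change.
for k, v in data.items():
    data[k] = to_device(v, 'cuda')

print(data)
```

The commented-out inputs = inputs.to(device) line in train() is a shorter route to the same result, since the tokenizer's BatchEncoding object provides a to() method.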

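labels = labels.to(device) moves the labels to CUDA as well.

outs does not need this step: it is computed by a GPU model from GPU inputs, so it is already on the GPU.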
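One detail of the training loop is worth checking: the model's forward() already applies softmax, and torch.nn.CrossEntropyLoss applies log-softmax internally, so the scores are normalized twice. The sketch below reproduces the arithmetic in plain Python for a single token. The logit values are made up, and whether this affects the results reported in this post has not been verified.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy(scores, target):
    """Cross-entropy on raw scores: -log(softmax(scores)[target])."""
    return -math.log(softmax(scores)[target])


# Made-up logits for 8 classes; the correct class (index 3) is strongly favored.
logits = [0.0, 0.0, 0.0, 10.0, 0.0, 0.0, 0.0, 0.0]
target = 3

loss_from_logits = cross_entropy(logits, target)          # softmax applied once
loss_from_probs = cross_entropy(softmax(logits), target)  # softmax applied twice

print(round(loss_from_logits, 4))
print(round(loss_from_probs, 4))
```

With 8 classes, the doubly normalized loss cannot fall below ln(1 + 7/e), roughly 1.274, even for a perfect prediction, because probabilities are confined to [0, 1] before the second softmax. Returning raw fc outputs from forward() and leaving the normalization to the loss is one way to avoid this, at the cost of changing the model code shown above.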

Training and testing

    # model.fine_tuneing(False)
    # train(500)
    print('Parameter count (x10,000):', sum(p.numel() for p in model.parameters()) / 10000)
    # train(1)

    model.fine_tuneing(True)
    train(200)
    print(sum(p.numel() for p in model.parameters()) / 10000)
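The first print reports the size of the downstream head only, since self.pretrained is None until fine_tuneing(True) is called. As a cross-check, that count can be derived by hand, assuming PyTorch's standard single-layer GRU layout (three gates, each with input weights, hidden weights, and two bias vectors). The helper names below are introduced for illustration.

```python
def gru_params(input_size, hidden_size):
    """Parameters of a single-layer, unidirectional GRU with biases."""
    weights = 3 * hidden_size * (input_size + hidden_size)  # weight_ih + weight_hh
    biases = 2 * 3 * hidden_size                            # bias_ih + bias_hh
    return weights + biases


def linear_params(in_features, out_features):
    """Parameters of a fully connected layer with bias."""
    return in_features * out_features + out_features


# GRU(768, 768) followed by Linear(768, 8), as in the Model class.
head = gru_params(768, 768) + linear_params(768, 8)
print(head / 10000)  # same unit as the print statements in the post
```

After model.fine_tuneing(True), the pretrained model is assigned to self.pretrained and becomes a registered submodule, so the second print also includes its parameters.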

    # train(2)

    # Test

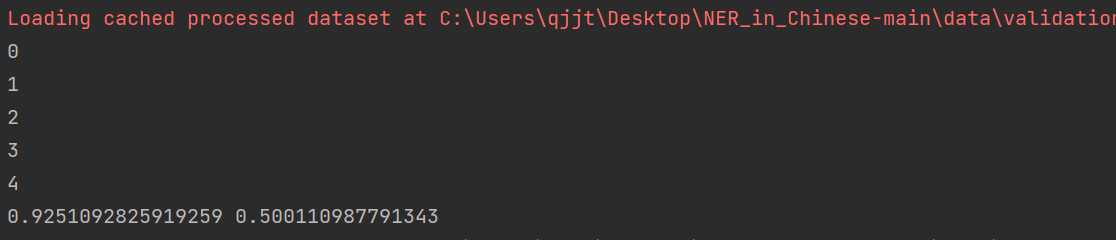

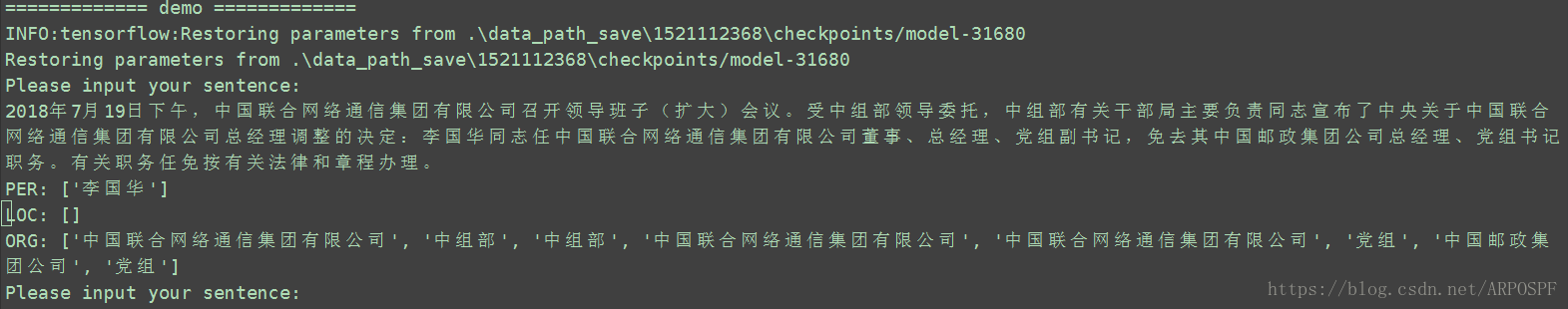

    def test():
        model_load = torch.load('model/命名实体识别_中文.model')
        model_load.eval()

        loader_test = torch.utils.data.DataLoader(dataset=Dataset('validation'),
                                                  batch_size=128,
                                                  collate_fn=collate_fn,
                                                  shuffle=True,
                                                  drop_last=True)

        correct = 0
        total = 0
        correct_content = 0
        total_content = 0

        for step, (inputs, labels) in enumerate(loader_test):
            if step == 5:
                break
            print(step)

            with torch.no_grad():
                # [b, lens] -> [b, lens, 8] -> [b, lens]
                for k, v in inputs.data.items():  # as above, move everything to CUDA
                    inputs.data[k] = v.cuda()
                labels = labels.cuda()
                outs = model_load(inputs)

            # Reshape outs and labels, and remove pad
            # outs -> [b, lens, 8] -> [c, 8]
            # labels -> [b, lens] -> [c]
            outs, labels = reshape_and_remove_pad(outs, labels,
                                                  inputs['attention_mask'])

            counts = get_correct_and_total_count(labels, outs)
            correct += counts[0]
            total += counts[1]
            correct_content += counts[2]
            total_content += counts[3]

        print(correct / total, correct_content / total_content)


    test()


    # Test: print predictions for one batch
    def predict():
        model_load = torch.load('model/命名实体识别_中文.model')
        model_load.eval()

        loader_test = torch.utils.data.DataLoader(dataset=Dataset('validation'),
                                                  batch_size=32,
                                                  collate_fn=collate_fn,
                                                  shuffle=True,
                                                  drop_last=True)

        for i, (inputs, labels) in enumerate(loader_test):
            break

        with torch.no_grad():
            # [b, lens] -> [b, lens, 8] -> [b, lens]
            for k, v in inputs.data.items():  # as above, move everything to CUDA
                inputs.data[k] = v.cuda()
            labels = labels.cuda()
            outs = model_load(inputs).argmax(dim=2)

        for i in range(32):
            # Remove pad
            select = inputs['attention_mask'][i] == 1
            input_id = inputs['input_ids'][i, select]
            out = outs[i, select]
            label = labels[i, select]

            # Print the original sentence
            print(tokenizer.decode(input_id).replace(' ', ''))

            # Print the tags: gold labels first, then predictions
            for tag in [label, out]:
                s = ''
                for j in range(len(tag)):
                    if tag[j] == 0:
                        s += '·'
                        continue
                    s += tokenizer.decode(input_id[j])
                    s += str(tag[j].item())

                print(s)

            print('==========================')


    predict()
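The two accuracy figures printed by test() come from reshape_and_remove_pad and get_correct_and_total_count. The sketch below mirrors their logic on plain Python lists so it can be run without PyTorch; the function name and the toy predictions, labels, and mask are invented for illustration.

```python
def masked_accuracy(preds, labels, mask):
    """Return (correct, total, correct_content, total_content).

    preds, labels and mask are flat lists of equal length; positions with
    mask == 0 are padding and are dropped before counting.
    """
    kept = [(p, y) for p, y, m in zip(preds, labels, mask) if m == 1]
    correct = sum(p == y for p, y in kept)
    total = len(kept)

    # Second pass: ignore the dominant label 0 ('O').
    content = [(p, y) for p, y in kept if y != 0]
    correct_content = sum(p == y for p, y in content)
    total_content = len(content)
    return correct, total, correct_content, total_content


# Toy sentence: [CLS], three characters, [SEP], one pad position.
preds = [7, 0, 1, 0, 7, 0]
labels = [7, 0, 1, 2, 7, 7]
mask = [1, 1, 1, 1, 1, 0]

counts = masked_accuracy(preds, labels, mask)
print(counts)
print(counts[0] / counts[1], counts[2] / counts[3])
```

Note that the filler label 7 on [CLS] and [SEP] is nonzero, so those positions are included in the "content" accuracy as well, just as in the original functions.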

    end = time.time()
    print('Running time: %s Seconds' % (end - start))

Complete code (BERT named entity recognition with GPU acceleration); a CRF will be added later

    from transformers import AutoTokenizer
    import time  # timing
    import torch
    from datasets import load_dataset, load_from_disk

    start = time.time()

    # Load the tokenizer
    tokenizer = AutoTokenizer.from_pretrained('hfl/rbt6')  # Chinese BERT
    print(tokenizer)

    # Tokenizer smoke test. No dataset is involved yet; this call only checks
    # in a Jupyter notebook that tokenization runs.
    tokenizer.batch_encode_plus(
        [
            ['海', '钓', '比', '赛', '地', '点', '在', '厦', '门', '与', '金', '门', '之', '间',
             '的', '海', '域', '。'],
            ['这', '座', '依', '山', '傍', '水', '的', '博', '物', '馆', '由', '国', '内', '一',
             '流', '的', '设', '计', '师', '主', '持', '设', '计', ',', '整', '个', '建', '筑',
             '群', '精', '美', '而', '恢', '宏', '。'],
        ],  # two test sentences
        truncation=True,
        padding=True,
        return_tensors='pt',  # return PyTorch tensors
        is_split_into_words=True)  # tell the encoder the sentences are already split


    class Dataset(torch.utils.data.Dataset):

        def __init__(self, split):
            # names = ['O', 'B-PER', 'I-PER', 'B-ORG', 'I-ORG', 'B-LOC', 'I-LOC']
            # (seen by opening the data file in a text editor). For example,
            # a three-character person name is labeled 1, 2, 2.

            # Load the dataset online
            # dataset = load_dataset(path='peoples_daily_ner', split=split)

            # Load the dataset from disk
            dataset = load_from_disk(dataset_path='./data')[split]

            # Filter out sentences that are too long (leave room for [CLS] and [SEP])
            def f(data):
                return len(data['tokens']) <= 512 - 2

            dataset = dataset.filter(f)
            self.dataset = dataset

        def __len__(self):
            return len(self.dataset)

        def __getitem__(self, i):
            tokens = self.dataset[i]['tokens']
            # 'tokens' and 'ner_tags' appear to be the dataset's default column names;
            # the People's Daily data only uses the O/B/I tags listed above
            labels = self.dataset[i]['ner_tags']
            return tokens, labels


    dataset = Dataset('train')
    tokens, labels = dataset[0]  # entity characters get a nonzero tag such as 5 or 6; others get 0
    len(dataset), tokens, labels

    # Collate function

    def collate_fn(data):
        tokens = [i[0] for i in data]  # one entry per sample; the count equals batch_size
        labels = [i[1] for i in data]  # i[1] is the label list of each (tokens, labels) sample

        # Encode the pre-split sentences
        inputs = tokenizer.batch_encode_plus(tokens,
                                             truncation=True,
                                             padding=True,
                                             return_tensors='pt',
                                             is_split_into_words=True)
        # print(tokenizer.decode(inputs['input_ids'][0]))
        # print(labels[0])

        # Padded length of this batch: the longest sentence plus [CLS] and [SEP];
        # shorter sentences are filled with [PAD] up to this length
        lens = inputs['input_ids'].shape[1]
        # print(lens)

        for i in range(len(labels)):  # 7 marks positions that are not real characters
            labels[i] = [7] + labels[i]  # prepend 7 for [CLS], e.g. [7, 0, 3, 4, 4, 4, ...]
            # print('head', labels[i])
            labels[i] += [7] * lens  # append lens copies of 7 to the tail
            # print('tail', labels[i])
            labels[i] = labels[i][:lens]  # trim every label list to the batch length

        return inputs, torch.LongTensor(labels)


    # Data loader
    loader = torch.utils.data.DataLoader(dataset=dataset,
                                         batch_size=8,  # with 16, GPU memory ran out when fine-tuning
                                         collate_fn=collate_fn,
                                         shuffle=True,
                                         drop_last=True)

    # Inspect a sample batch

    for i, (inputs, labels) in enumerate(loader):
        break

    print(len(loader))
    print(tokenizer.decode(inputs['input_ids'][0]))
    print(labels[0])

    # e.g. input_ids torch.Size([16, 95]): 16 is batch_size, 95 is lens and varies per batch
    for k, v in inputs.items():
        print(k, v.shape)

    from transformers import AutoModel

    # Load the pretrained model
    pretrained = AutoModel.from_pretrained('hfl/rbt6')
    pretrained = pretrained.cuda()  # important: the pretrained model must also run on the GPU

    # Count parameters, in units of 10,000
    print(sum(i.numel() for i in pretrained.parameters()) / 10000)

    # Trial run of the model
    # [b, lens] -> [b, lens, 768]
    # inputs behaves like a dict wrapping three tensors, so each tensor
    # is moved to the GPU individually
    for k, v in inputs.data.items():
        inputs.data[k] = v.cuda()

    pretrained(**inputs).last_hidden_state.shape

    # Define the downstream model

    class Model(torch.nn.Module):

        def __init__(self):
            super().__init__()
            self.tuneing = False  # fine-tuning is off by default
            self.pretrained = None  # by default the pretrained model is not part of the downstream model

            # Downstream task head
            self.rnn = torch.nn.GRU(768, 768, batch_first=True)
            self.fc = torch.nn.Linear(768, 8)

        def forward(self, inputs):
            if self.tuneing:
                # When fine-tuning, the model's own pretrained module is trained too
                out = self.pretrained(**inputs).last_hidden_state
            else:
                # Otherwise use the external pretrained model, without gradients
                with torch.no_grad():
                    out = pretrained(**inputs).last_hidden_state  # inputs is dict-like

            out, _ = self.rnn(out)  # the GRU also returns its final hidden state, discarded as _
            # print('before', out.shape)  # e.g. torch.Size([128, 157, 768])
            out = self.fc(out).softmax(dim=2)  # fc maps 768 -> 8 classes, so softmax runs over dim=2
            # print(out.shape)
            return out

        def fine_tuneing(self, tuneing):
            self.tuneing = tuneing
            if tuneing:
                # In fine-tuning mode the pretrained parameters are updated
                for i in pretrained.parameters():
                    i.requires_grad = True

                pretrained.train()  # put the pretrained model in training mode
                self.pretrained = pretrained  # the pretrained model becomes part of this model
            else:
                for i in pretrained.parameters():
                    i.requires_grad_(False)

                pretrained.eval()
                self.pretrained = None


    model = Model().cuda()

    # Reshape the outputs and labels, and use the attention mask to drop pad positions

    def reshape_and_remove_pad(outs, labels, attention_mask):
        # Flatten to make the loss easy to compute
        # [b, lens, 8] -> [b*lens, 8]: merge the n sentences
        outs = outs.reshape(-1, 8)
        # [b, lens] -> [b*lens]: concatenate the labels of the n sentences
        labels = labels.reshape(-1)

        # Ignore results at pad positions, selected via the attention mask
        # [b, lens] -> [b*lens - pad]
        select = attention_mask.reshape(-1) == 1
        outs = outs[select]
        labels = labels[select]

        return outs, labels


    # Count correct predictions and totals
    def get_correct_and_total_count(labels, outs):
        # [b*lens, 8] -> [b*lens]
        outs = outs.argmax(dim=1)  # predicted tag: the class with the highest probability
        correct = (outs == labels).sum().item()
        total = len(labels)

        # Accuracy over labels other than 0. There are so many 0 labels that
        # including them easily inflates the accuracy.
        select = labels != 0
        outs = outs[select]
        labels = labels[select]
        correct_content = (outs == labels).sum().item()
        total_content = len(labels)

        return correct, total, correct_content, total_content


    # Note: newer transformers releases deprecate this AdamW in favor of torch.optim.AdamW
    from transformers import AdamW

    # Use the GPU if available, else the CPU

    device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")
    print('Using device:', device)

    # Additional info when using CUDA
    if device.type == 'cuda':
        print(torch.cuda.get_device_name(0))
        print('Memory Usage:')
        print('Allocated:', round(torch.cuda.memory_allocated(0) / 1024 ** 3, 1), 'GB')
        print('Cached: ', round(torch.cuda.memory_reserved(0) / 1024 ** 3, 1), 'GB')


    # Training
    def train(epochs):
        # model.tuneing is set by the model.fine_tuneing(True) call further down
        lr = 2e-5 if model.tuneing else 5e-4
        device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

        optimizer = AdamW(model.parameters(), lr=lr)
        criterion = torch.nn.CrossEntropyLoss()

        print('Start training')
        model.train()
        for epoch in range(epochs):
            for step, (inputs, labels) in enumerate(loader):
                # Forward pass
                # [b, lens] -> [b, lens, 8]
                # inputs = inputs.to(device)
                for k, v in inputs.data.items():  # same as above
                    inputs.data[k] = v.cuda()
                # outs needs no explicit move; only inputs and labels do
                outs = model(inputs)
                labels = labels.to(device)
                # print('on cuda?', outs.is_cuda, labels.is_cuda)

                # Reshape outs and labels, and remove pad
                # outs -> [b, lens, 8] -> [c, 8]
                # labels -> [b, lens] -> [c]
                outs, labels = reshape_and_remove_pad(outs, labels,
                                                      inputs['attention_mask'])

                # Gradient descent
                loss = criterion(outs, labels).to(device)
                loss.backward()
                optimizer.step()
                optimizer.zero_grad()

                if step % 50 == 0:
                    counts = get_correct_and_total_count(labels, outs)
                    accuracy = counts[0] / counts[1]
                    accuracy_content = counts[2] / counts[3]
                    print(epoch, step, loss.item(), accuracy, accuracy_content)

            torch.save(model, 'model/命名实体识别_中文.model')


    # model.fine_tuneing(False)

    # train(500)
    print('Parameter count (x10,000):', sum(p.numel() for p in model.parameters()) / 10000)
    # train(1)

    model.fine_tuneing(True)
    train(200)
    print(sum(p.numel() for p in model.parameters()) / 10000)
    # train(2)

    # Test

    def test():
        model_load = torch.load('model/命名实体识别_中文.model')
        model_load.eval()

        loader_test = torch.utils.data.DataLoader(dataset=Dataset('validation'),
                                                  batch_size=128,
                                                  collate_fn=collate_fn,
                                                  shuffle=True,
                                                  drop_last=True)

        correct = 0
        total = 0
        correct_content = 0
        total_content = 0

        for step, (inputs, labels) in enumerate(loader_test):
            if step == 5:
                break
            print(step)

            with torch.no_grad():
                # [b, lens] -> [b, lens, 8] -> [b, lens]
                for k, v in inputs.data.items():  # as above, move everything to CUDA
                    inputs.data[k] = v.cuda()

After switching to the GPU version, the training results were not very good: