唐宇迪视频学习笔记

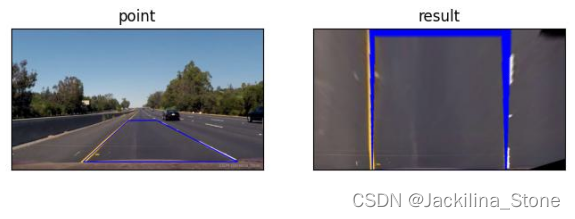

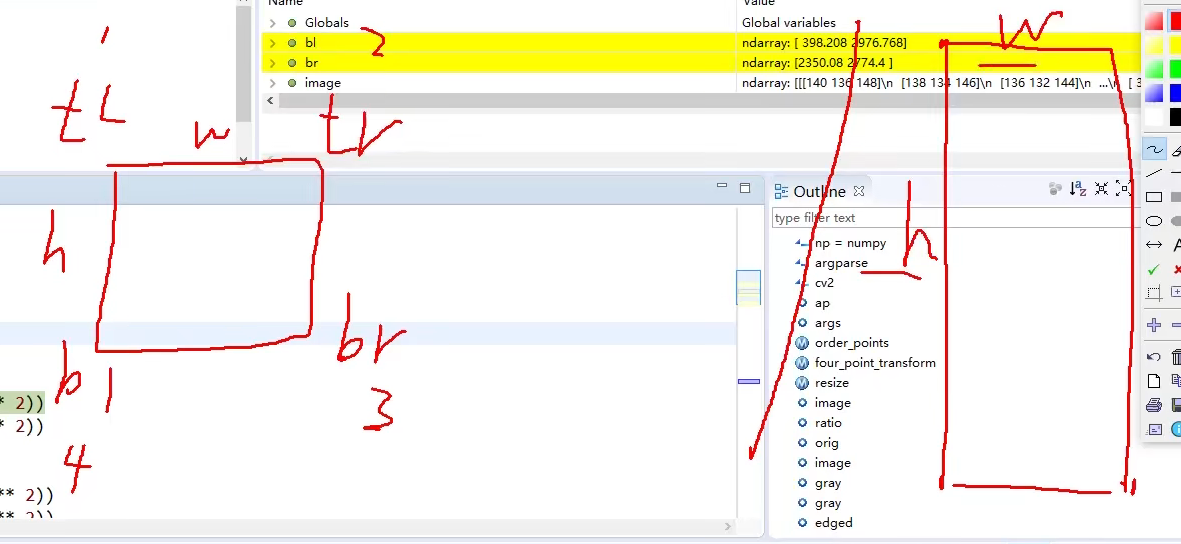

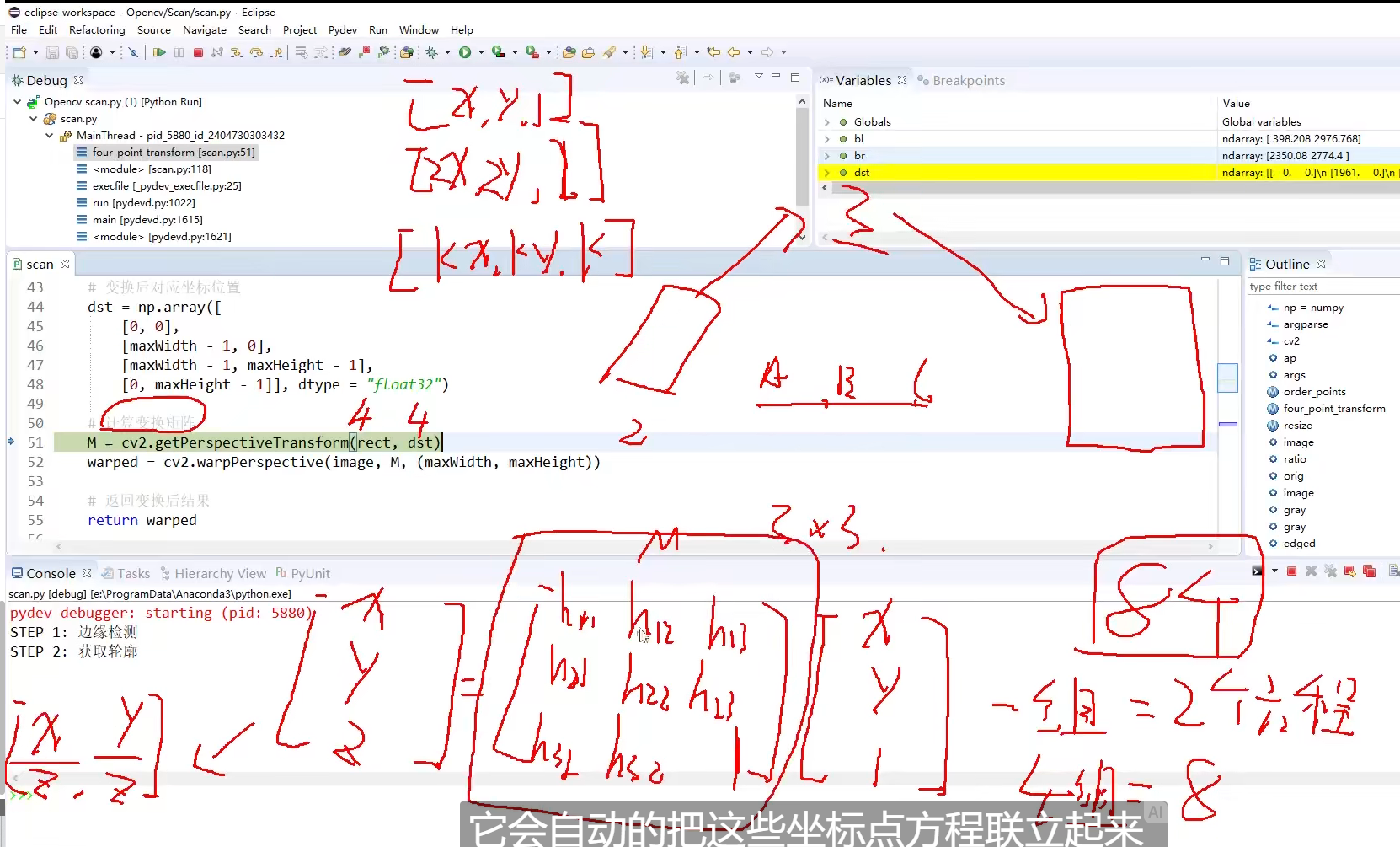

def four_point_transform(image, pts):# 获取输入坐标点rect = order_points(pts)(tl, tr, br, bl) = rect# 计算输入的w和h值widthA = np.sqrt(((br[0] - bl[0]) ** 2) + ((br[1] - bl[1]) ** 2))widthB = np.sqrt(((tr[0] - tl[0]) ** 2) + ((tr[1] - tl[1]) ** 2))maxWidth = max(int(widthA), int(widthB))heightA = np.sqrt(((tr[0] - br[0]) ** 2) + ((tr[1] - br[1]) ** 2))heightB = np.sqrt(((tl[0] - bl[0]) ** 2) + ((tl[1] - bl[1]) ** 2))maxHeight = max(int(heightA), int(heightB))# 变换后对应坐标位置dst = np.array([[0, 0],[maxWidth - 1, 0],[maxWidth - 1, maxHeight - 1],[0, maxHeight - 1]], dtype="float32")# 计算变换矩阵M = cv2.getPerspectiveTransform(rect, dst)warped = cv2.warpPerspective(image, M, (maxWidth, maxHeight))# 返回变换后结果return warped

pts 为轮廓检测后通过轮廓近似得到的四个点

# 遍历轮廓

for c in cnts:# 计算轮廓近似peri = cv2.arcLength(c, True)# C表示输入的点集# epsilon表示从原始轮廓到近似轮廓的最大距离,它是一个准确度参数# True表示封闭的approx = cv2.approxPolyDP(c, 0.02 * peri, True)# 4个点的时候就拿出来if len(approx) == 4:screenCnt = approxbreak

pts 的输入值即为 screenCnt.reshape(4, 2)

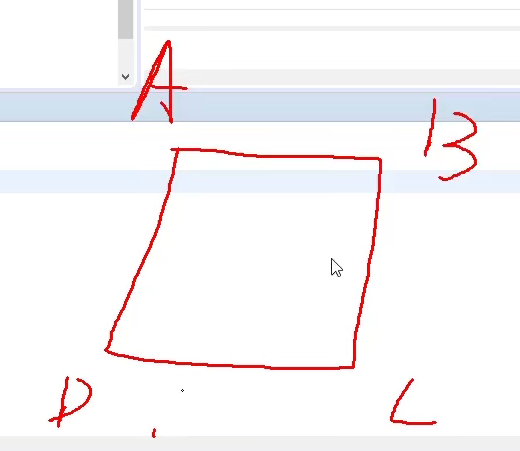

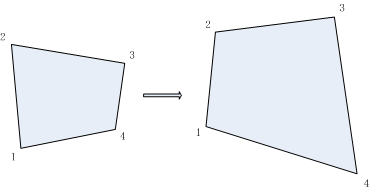

转换之前的坐标已有,需要转换之后的坐标。转换之后坐标(0, 0)已知,需要计算W和H。

只要基于两个点的距离计算,即可得出W和H。

做了近似不一定是个矩形,可能是个多边形,因而转换完之后取大值。

-1 是为了不出错

已知输入点和输出点,即可去计算当前变换的矩阵。

平移+旋转+翻转

二维到三维,只添加一个维度,不改变实际坐标值,一般都取1。

整体代码

# 导入工具包

import numpy as np

import argparse

import cv2def order_points(pts):# 一共4个坐标点rect = np.zeros((4, 2), dtype="float32")# 按顺序找到对应坐标0123分别是 左上,右上,右下,左下# 计算左上,右下s = pts.sum(axis=1)rect[0] = pts[np.argmin(s)]rect[2] = pts[np.argmax(s)]# 计算右上和左下diff = np.diff(pts, axis=1)rect[1] = pts[np.argmin(diff)]rect[3] = pts[np.argmax(diff)]return rectdef four_point_transform(image, pts):# 获取输入坐标点rect = order_points(pts)(tl, tr, br, bl) = rect# 计算输入的w和h值widthA = np.sqrt(((br[0] - bl[0]) ** 2) + ((br[1] - bl[1]) ** 2))widthB = np.sqrt(((tr[0] - tl[0]) ** 2) + ((tr[1] - tl[1]) ** 2))maxWidth = max(int(widthA), int(widthB))heightA = np.sqrt(((tr[0] - br[0]) ** 2) + ((tr[1] - br[1]) ** 2))heightB = np.sqrt(((tl[0] - bl[0]) ** 2) + ((tl[1] - bl[1]) ** 2))maxHeight = max(int(heightA), int(heightB))# 变换后对应坐标位置dst = np.array([[0, 0],[maxWidth - 1, 0],[maxWidth - 1, maxHeight - 1],[0, maxHeight - 1]], dtype="float32")# 计算变换矩阵M = cv2.getPerspectiveTransform(rect, dst)warped = cv2.warpPerspective(image, M, (maxWidth, maxHeight))# 返回变换后结果return warpeddef resize(image, width=None, height=None, inter=cv2.INTER_AREA):dim = None(h, w) = image.shape[:2]if width is None and height is None:return imageif width is None:r = height / float(h)dim = (int(w * r), height)else:r = width / float(w)dim = (width, int(h * r))resized = cv2.resize(image, dim, interpolation=inter)return resized# 读取输入

image = cv2.imread("flower.jpg")

# 坐标也会相同变化

ratio = image.shape[0] / 500.0

orig = image.copy()image = resize(orig, height=500)# 预处理

gray = cv2.cvtColor(image, cv2.COLOR_BGR2GRAY)

gray = cv2.GaussianBlur(gray, (5, 5), 0)

edged = cv2.Canny(gray, 75, 200)# 展示预处理结果

print("STEP 1: 边缘检测")

cv2.imshow("Image", image)

cv2.imshow("Edged", edged)

cv2.waitKey(0)

cv2.destroyAllWindows()# 轮廓检测

cnts = cv2.findContours(edged.copy(), cv2.RETR_LIST, cv2.CHAIN_APPROX_SIMPLE)[0]

cnts = sorted(cnts, key=cv2.contourArea, reverse=True)[:5]# 遍历轮廓

for c in cnts:# 计算轮廓近似peri = cv2.arcLength(c, True)# C表示输入的点集# epsilon表示从原始轮廓到近似轮廓的最大距离,它是一个准确度参数# True表示封闭的approx = cv2.approxPolyDP(c, 0.02 * peri, True)# 4个点的时候就拿出来if len(approx) == 4:screenCnt = approxbreak# 展示结果

print("STEP 2: 获取轮廓")

cv2.drawContours(image, [screenCnt], -1, (0, 255, 0), 2)

cv2.imshow("Outline", image)

cv2.waitKey(0)

cv2.destroyAllWindows()

# 透视变换

warped = four_point_transform(orig, screenCnt.reshape(4, 2) * ratio)# 二值处理

warped = cv2.cvtColor(warped, cv2.COLOR_BGR2GRAY)

ref = cv2.threshold(warped, 100, 255, cv2.THRESH_BINARY)[1]

cv2.imwrite('scan.jpg', ref)

# 展示结果

print("STEP 3: 变换")

cv2.imshow("Original", resize(orig, height=650))

cv2.imshow("Scanned", resize(ref, height=650))

cv2.waitKey(0)

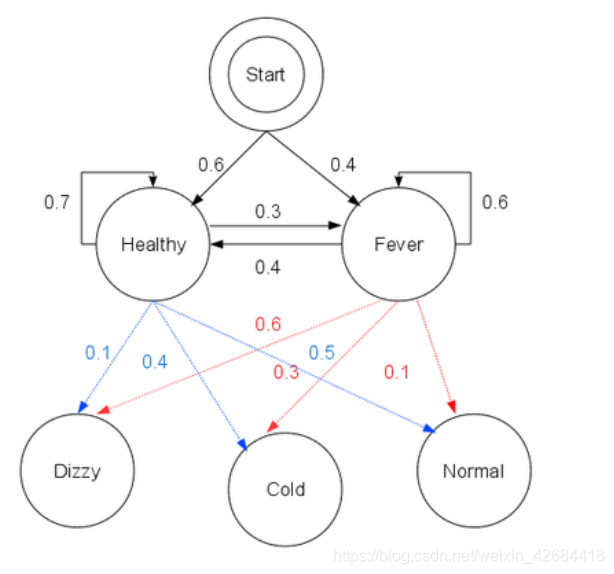

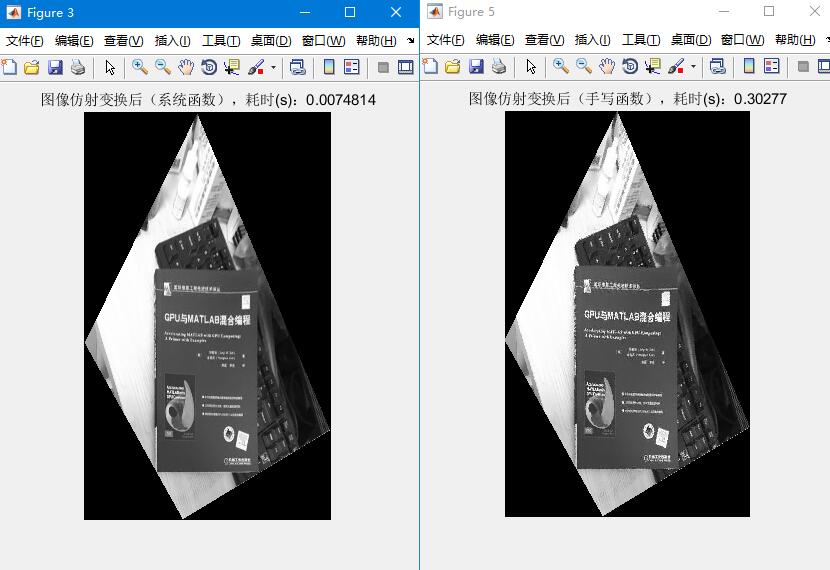

![[解疑]图像、矩阵的二维空间变换](https://img-blog.csdnimg.cn/20200311183346365.png#pic_center)