目录

1. Decoupled Head介绍

2.Yolov5加入Decoupled_Detect

2.1 DecoupledHead加入common.py中:

2.2 Decoupled_Detect加入yolo.py中:

2.3修改yolov5s_decoupled.yaml

3.数据集下验证性能

🏆 🏆🏆🏆🏆🏆🏆Yolov5/Yolov7成长师🏆🏆🏆🏆🏆🏆🏆

🍉🍉进阶专栏Yolov5/Yolov7魔术师:http://t.csdn.cn/D4NqB 🍉🍉

✨✨✨魔改网络、复现前沿论文,组合优化创新

🚀🚀🚀小目标、遮挡物、难样本性能提升

🌰 🌰 🌰在不同数据集验证能够涨点,对小目标涨点明显

1. Decoupled Head介绍

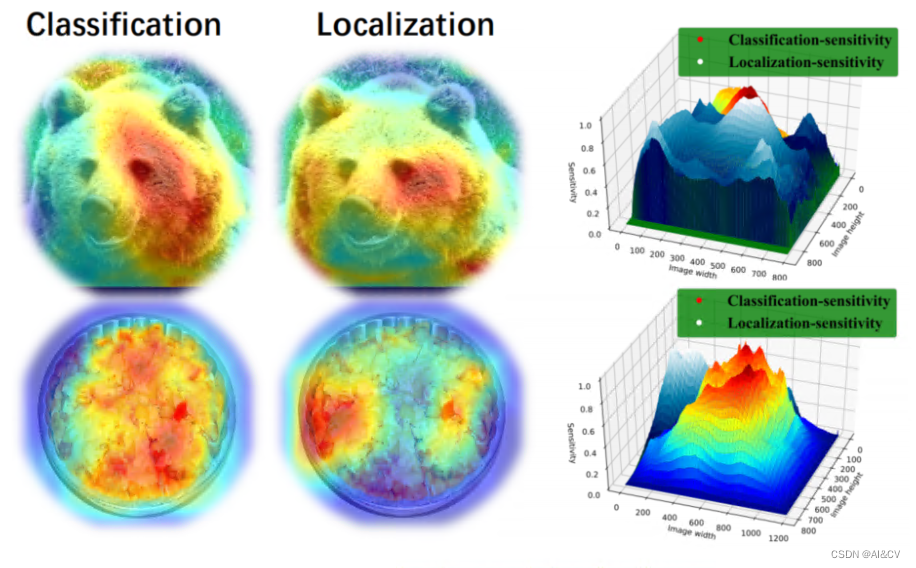

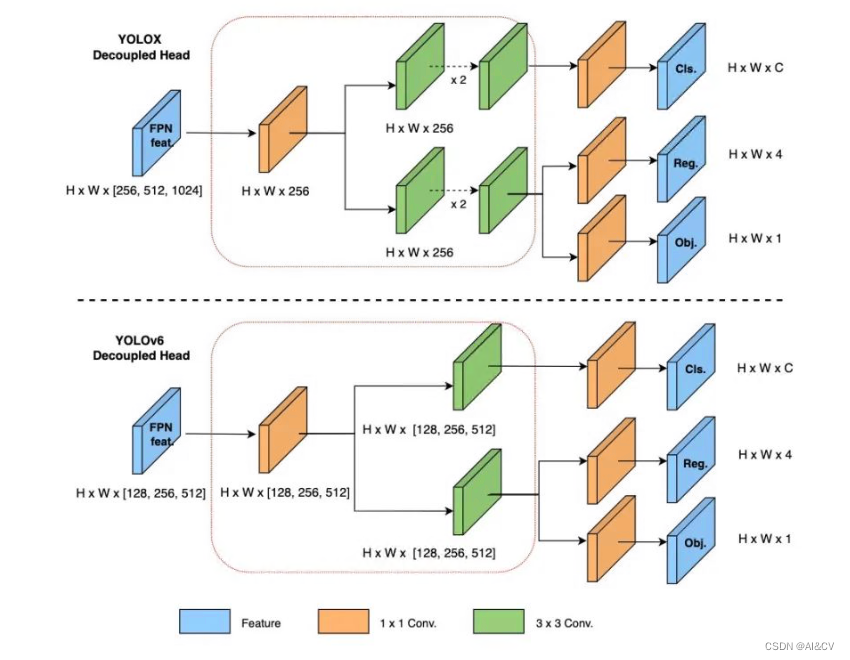

为什么要用到解耦头?

因为分类和定位的关注点不同;

分类更关注目标的纹理内容;

定位更关注目标的边缘信息;

YOLOv6 采用了解耦检测头(Decoupled Head)结构,同时综合考虑到相关算子表征能力和硬件上计算开销这两者的平衡,采用 Hybrid Channels 策略重新设计了一个更高效的解耦头结构,在维持精度的同时降低了延时,缓解了解耦头中 3x3 卷积带来的额外延时开销。

原始 YOLOv5 的检测头是通过分类和回归分支融合共享的方式来实现的,因此加入 Decoupled Head。

2.Yolov5加入Decoupled_Detect

2.1 DecoupledHead加入common.py中:

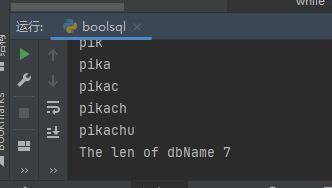

class DecoupledHead(nn.Module):def __init__(self, ch=256, nc=80, anchors=()):super().__init__()self.nc = nc # number of classesself.nl = len(anchors) # number of detection layersself.na = len(anchors[0]) // 2 # number of anchorsself.merge = Conv(ch, 256 , 1, 1)self.cls_convs1 = Conv(256 , 256 , 3, 1, 1)self.cls_convs2 = Conv(256 , 256 , 3, 1, 1)self.reg_convs1 = Conv(256 , 256 , 3, 1, 1)self.reg_convs2 = Conv(256 , 256 , 3, 1, 1)self.cls_preds = nn.Conv2d(256 , self.nc * self.na, 1)self.reg_preds = nn.Conv2d(256 , 4 * self.na, 1)self.obj_preds = nn.Conv2d(256 , 1 * self.na, 1)def forward(self, x):x = self.merge(x)x1 = self.cls_convs1(x)x1 = self.cls_convs2(x1)x1 = self.cls_preds(x1)x2 = self.reg_convs1(x)x2 = self.reg_convs2(x2)x21 = self.reg_preds(x2)x22 = self.obj_preds(x2)out = torch.cat([x21, x22, x1], 1)return out2.2 Decoupled_Detect加入yolo.py中:

class Decouple(nn.Module):# Decoupled convolutiondef __init__(self, c1, nc=80, na=3): # ch_in, num_classes, num_anchorssuper().__init__()c_ = min(c1, 256) # min(c1, nc * na)self.na = na # number of anchorsself.nc = nc # number of classesself.a = Conv(c1, c_, 1)c = [int(x + na * 5) for x in (c_ - na * 5) * torch.linspace(1, 0, 4)] # linear channel descentself.b1, self.b2, self.b3 = Conv(c_, c[1], 3), Conv(c[1], c[2], 3), nn.Conv2d(c[2], na * 5, 1) # vcself.c1, self.c2, self.c3 = Conv(c_, c_, 1), Conv(c_, c_, 1), nn.Conv2d(c_, na * nc, 1) # clsdef forward(self, x):bs, nc, ny, nx = x.shape # BCHWx = self.a(x)b = self.b3(self.b2(self.b1(x)))c = self.c3(self.c2(self.c1(x)))return torch.cat((b.view(bs, self.na, 5, ny, nx), c.view(bs, self.na, self.nc, ny, nx)), 2).view(bs, -1, ny, nx) class Decoupled_Detect(nn.Module):stride = None # strides computed during buildonnx_dynamic = False # ONNX export parameterexport = False # export modedef __init__(self, nc=80, anchors=(), ch=(), inplace=True): # detection layersuper().__init__()self.nc = nc # number of classesself.no = nc + 5 # number of outputs per anchorself.nl = len(anchors) # number of detection layersself.na = len(anchors[0]) // 2 # number of anchorsself.grid = [torch.zeros(1)] * self.nl # init gridself.anchor_grid = [torch.zeros(1)] * self.nl # init anchor gridself.register_buffer('anchors', torch.tensor(anchors).float().view(self.nl, -1, 2)) # shape(nl,na,2) self.m=nn.ModuleList(Decouple(x, self.nc, self.na) for x in ch) #yolov5 provide , old Decouple too much FLOPself.inplace = inplace # use in-place ops (e.g. slice assignment)def forward(self, x):z = [] # inference outputfor i in range(self.nl):x[i] = self.m[i](x[i]) # convbs, _, ny, nx = x[i].shape # x(bs,255,20,20) to x(bs,3,20,20,85)x[i] = x[i].view(bs, self.na, self.no, ny, nx).permute(0, 1, 3, 4, 2).contiguous()if not self.training: # inferenceif self.onnx_dynamic or self.grid[i].shape[2:4] != x[i].shape[2:4]:self.grid[i], self.anchor_grid[i] = self._make_grid(nx, ny, i)y = x[i].sigmoid()if self.inplace:y[..., 0:2] = (y[..., 0:2] * 2 + self.grid[i]) * self.stride[i] # xyy[..., 2:4] = (y[..., 2:4] * 2) ** 2 * self.anchor_grid[i] # whelse: # for YOLOv5 on AWS Inferentia https://github.com/ultralytics/yolov5/pull/2953xy, wh, conf = y.split((2, 2, self.nc + 1), 4) # y.tensor_split((2, 4, 5), 4) # torch 1.8.0xy = (xy * 2 + self.grid[i]) * self.stride[i] # xywh = (wh * 2) ** 2 * self.anchor_grid[i] # why = torch.cat((xy, wh, conf), 4)z.append(y.view(bs, -1, self.no))return x if self.training else (torch.cat(z, 1),) if self.export else (torch.cat(z, 1), x)def _make_grid(self, nx=20, ny=20, i=0, torch_1_10=check_version(torch.__version__, '1.10.0')):d = self.anchors[i].devicet = self.anchors[i].dtypeshape = 1, self.na, ny, nx, 2 # grid shapey, x = torch.arange(ny, device=d, dtype=t), torch.arange(nx, device=d, dtype=t)yv, xv = torch.meshgrid(y, x, indexing='ij') if torch_1_10 else torch.meshgrid(y, x) # torch>=0.7 compatibilitygrid = torch.stack((xv, yv), 2).expand(shape) - 0.5 # add grid offset, i.e. y = 2.0 * x - 0.5anchor_grid = (self.anchors[i] * self.stride[i]).view((1, self.na, 1, 1, 2)).expand(shape)return grid, anchor_gridclass BaseModel(nn.Module):

def _apply(self, fn):# Apply to(), cpu(), cuda(), half() to model tensors that are not parameters or registered buffersself = super()._apply(fn)m = self.model[-1] # Detect()if isinstance(m, (Detect, Segment,Decoupled_Detect)):m.stride = fn(m.stride)m.grid = list(map(fn, m.grid))if isinstance(m.anchor_grid, list):m.anchor_grid = list(map(fn, m.anchor_grid))return self

class DetectionModel(BaseModel):

def _initialize_dh_biases(self, cf=None): # initialize biases into Detect(), cf is class frequency# https://arxiv.org/abs/1708.02002 section 3.3# cf = torch.bincount(torch.tensor(np.concatenate(dataset.labels, 0)[:, 0]).long(), minlength=nc) + 1.m = self.model[-1] # Detect() modulefor mi, s in zip(m.m, m.stride): # from# reg_bias = mi.reg_preds.bias.view(m.na, -1).detach()# reg_bias += math.log(8 / (640 / s) ** 2)# mi.reg_preds.bias = torch.nn.Parameter(reg_bias.view(-1), requires_grad=True)# cls_bias = mi.cls_preds.bias.view(m.na, -1).detach()# cls_bias += math.log(0.6 / (m.nc - 0.999999)) if cf is None else torch.log(cf / cf.sum()) # cls# mi.cls_preds.bias = torch.nn.Parameter(cls_bias.view(-1), requires_grad=True)b = mi.b3.bias.view(m.na, -1)b.data[:, 4] += math.log(8 / (640 / s) ** 2) # obj (8 objects per 640 image)mi.b3.bias = torch.nn.Parameter(b.view(-1), requires_grad=True)b = mi.c3.bias.datab += math.log(0.6 / (m.nc - 0.999999)) if cf is None else torch.log(cf / cf.sum()) # clsmi.c3.bias = torch.nn.Parameter(b, requires_grad=True) if isinstance(m, (Detect, Segment,ASFF_Detect)):s = 256 # 2x min stridem.inplace = self.inplaceforward = lambda x: self.forward(x)[0] if isinstance(m, Segment) else self.forward(x)m.stride = torch.tensor([s / x.shape[-2] for x in forward(torch.zeros(1, ch, s, s))]) # forwardcheck_anchor_order(m)m.anchors /= m.stride.view(-1, 1, 1)self.stride = m.strideself._initialize_biases() # only run onceelif isinstance(m, Decoupled_Detect):s = 256 # 2x min stridem.inplace = self.inplacem.stride = torch.tensor([s / x.shape[-2] for x in self.forward(torch.zeros(1, ch, s, s))]) # forwardcheck_anchor_order(m) # must be in pixel-space (not grid-space)m.anchors /= m.stride.view(-1, 1, 1)self.stride = m.strideself._initialize_dh_biases() # only run oncedef parse_model(d, ch): # model_dict, input_channels(3)

elif m in {Detect, Segment,Decoupled_Detect}:args.append([ch[x] for x in f])if isinstance(args[1], int): # number of anchorsargs[1] = [list(range(args[1] * 2))] * len(f)if m is Segment:args[3] = make_divisible(args[3] * gw, 8)2.3修改yolov5s_decoupled.yaml

# YOLOv5 🚀 by Ultralytics, GPL-3.0 license# Parameters

nc: 1 # number of classes

depth_multiple: 0.33 # model depth multiple

width_multiple: 0.50 # layer channel multiple

anchors:- [10,13, 16,30, 33,23] # P3/8- [30,61, 62,45, 59,119] # P4/16- [116,90, 156,198, 373,326] # P5/32# YOLOv5 v6.0 backbone

backbone:# [from, number, module, args][[-1, 1, Conv, [64, 6, 2, 2]], # 0-P1/2[-1, 1, Conv, [128, 3, 2]], # 1-P2/4[-1, 3, C3, [128]],[-1, 1, Conv, [256, 3, 2]], # 3-P3/8[-1, 6, C3, [256]],[-1, 1, Conv, [512, 3, 2]], # 5-P4/16[-1, 9, C3, [512]],[-1, 1, Conv, [1024, 3, 2]], # 7-P5/32[-1, 3, C3, [1024]],[-1, 1, SPPF, [1024, 5]], # 9]# YOLOv5 v6.0 head

head:[[-1, 1, Conv, [512, 1, 1]],[-1, 1, nn.Upsample, [None, 2, 'nearest']],[[-1, 6], 1, Concat, [1]], # cat backbone P4[-1, 3, C3, [512, False]], # 13[-1, 1, Conv, [256, 1, 1]],[-1, 1, nn.Upsample, [None, 2, 'nearest']],[[-1, 4], 1, Concat, [1]], # cat backbone P3[-1, 3, C3, [256, False]], # 17 (P3/8-small)[-1, 1, Conv, [256, 3, 2]],[[-1, 14], 1, Concat, [1]], # cat head P4[-1, 3, C3, [512, False]], # 20 (P4/16-medium)[-1, 1, Conv, [512, 3, 2]],[[-1, 10], 1, Concat, [1]], # cat head P5[-1, 3, C3, [1024, False]], # 23 (P5/32-large)[[17, 20, 23], 1, Decoupled_Detect, [nc, anchors]], # Detect(P3, P4, P5),解耦]

3.数据集下验证性能

基于Yolov5的道路缺陷识别,加入ASFF和EVC优化_AI&CV的博客-CSDN博客