Building every ResNet variant: ResNet18, ResNet34, ResNet50, ResNet101, ResNet152

Original paper: https://arxiv.org/pdf/1512.03385.pdf

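I won't walk through the paper here. Most write-ups are little more than translations that leave you only half understanding it; what matters is working through it yourself.

Recently I wanted to implement some classic network architectures, so after reading the paper I started implementing it, following the network structure it describes.

At first I searched for existing implementations and found that many blog posts are riddled with errors. Some have been online for years without anyone catching the mistakes, and with dozens of commenters it is hard to tell who really understood and who was pretending. A bit frustrating.

So I decided to write the network by hand, both to practice coding and to deepen my understanding of the architecture.

I was pleased once it was done. I have always thought of myself as a beginner, yet lately I keep finding mistakes in blog posts that many readers never noticed, so maybe I do have some skill after all.

In the end I implemented every ResNet variant in a single codebase, for convenience.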

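In hindsight, I think it would be cleaner to write each network separately, because the internals differ a lot between variants. The code below defines ResidualBlock and BottleNeckBlock as separate classes, but the channel bookkeeping is still intricate. I wanted the code to be reusable, yet the complexity limited how far that could go, so the result is neither compact nor highly reusable. It works, barely. Besides splitting the variants, the repeated blocks could also be written out separately: BottleNeckBlock changes dimensions in complicated ways and takes many parameters, so split where you can and reuse only where the complexity is low.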
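To make the bottleneck channel bookkeeping concrete, here is a minimal sketch in plain Python (no PyTorch needed). `bottleneck_plan` is a hypothetical helper I made up for illustration, not part of the post's code. It assumes the usual ResNet50-style layout: a 64-channel stem, middle widths of 64/128/256/512, and a 4x expansion on each block's output.

```python
# Hypothetical helper for illustration only; the names and defaults are assumptions.

def bottleneck_plan(blocks_per_stage, stem_channels=64, expansion=4):
    """Return one (stage, in_ch, mid_ch, out_ch, stride) tuple per bottleneck block."""
    plan = []
    in_ch = stem_channels
    for stage, num_blocks in enumerate(blocks_per_stage, start=1):
        mid_ch = 64 * 2 ** (stage - 1)  # 64, 128, 256, 512
        out_ch = mid_ch * expansion     # 256, 512, 1024, 2048
        for block in range(num_blocks):
            # Only the first block of stages 2-4 halves the resolution.
            stride = 2 if (block == 0 and stage > 1) else 1
            plan.append((stage, in_ch, mid_ch, out_ch, stride))
            in_ch = out_ch              # later blocks receive the widened output
    return plan


if __name__ == "__main__":
    for row in bottleneck_plan([3, 4, 6, 3]):
        print("stage %d: %4d -> %3d -> %4d, stride %d" % row)
```

Only the first block of each stage sees an input width different from its output width, which is why that block needs a projection shortcut while the remaining blocks can use the identity.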

以下是网络结构和实现代码,检验后都是对的;水平有限,如发现有错误,欢迎评论告知!

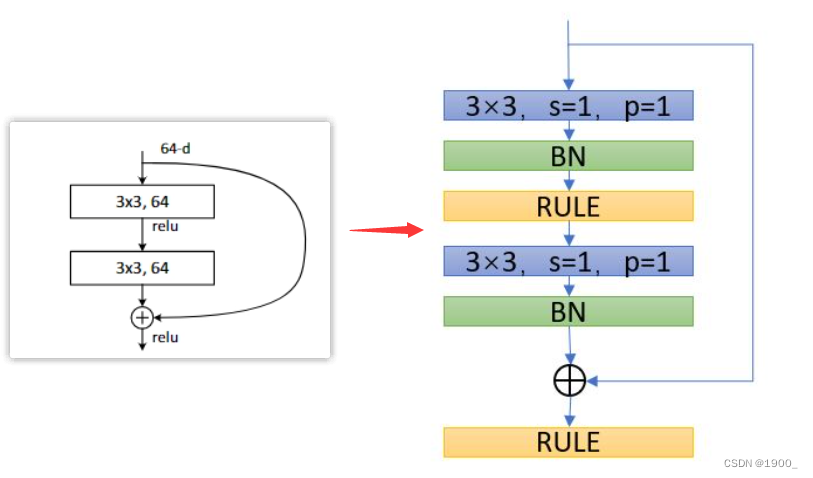

1 Residual block diagram
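For reference, the residual block can be written in the paper's notation, as I read it: $x$ is the block input, $\mathcal{F}$ is the stacked convolution branch, and $W_s$ is a projection (a 1x1 convolution in practice) used only when the input and output dimensions differ.

$$
y = \mathcal{F}(x, \{W_i\}) + x
\qquad\text{or}\qquad
y = \mathcal{F}(x, \{W_i\}) + W_s x
$$

The final ReLU is applied to $y$, that is, after the addition.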

2 VGG-19 compared with ResNet34

3 Structure of each ResNet variant

![Structure of each ResNet variant](https://img-blog.csdnimg.cn/20201012203126776.png?x-oss-process=image/watermark,type_ZmFuZ3poZW5naGVpdGk,shadow_10,text_aHR0cHM6Ly9ibG9nLmNzZG4ubmV0L3dlaXhpbl80NDI3NzI4MA==,size_16,color_FFFFFF,t_70#pic_center)
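As a quick sanity check on the table, the number in each model name can be recovered from the per-stage block counts. The sketch below is plain Python; `CONFIGS` and `depth` are names I made up for illustration. It assumes the usual counting convention: the stem convolution, every convolution on the main path of the blocks, and the final fully connected layer, with shortcut projections not counted.

```python
# Illustrative names; (blocks per stage, convolutions per block) for each variant.
CONFIGS = {
    "ResNet18": ([2, 2, 2, 2], 2),    # basic block: two 3x3 convs
    "ResNet34": ([3, 4, 6, 3], 2),
    "ResNet50": ([3, 4, 6, 3], 3),    # bottleneck: 1x1, 3x3, 1x1
    "ResNet101": ([3, 4, 23, 3], 3),
    "ResNet152": ([3, 8, 36, 3], 3),
}


def depth(blocks_per_stage, convs_per_block):
    """Weighted layers: stem conv + convs inside all blocks + final fc layer."""
    return 1 + sum(blocks_per_stage) * convs_per_block + 1


if __name__ == "__main__":
    for name, (blocks, convs) in CONFIGS.items():
        print(name, depth(blocks, convs))
```

ResNet34 and ResNet50 share the same block counts; the depth differs only because each bottleneck block has three convolutions instead of two.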

4 Code for every variant

    import torch.nn as nn
    from torch.nn import functional as F


    class ResNetModel(nn.Module):
        """Generic ResNet; the block counts and block type select the variant."""

        def __init__(self, num_classes=1000, layer_num=(2, 2, 2, 2), bottleneck=False):
            super().__init__()
            # conv1 stem, input 224x224x3
            self.pre = nn.Sequential(
                # 7x7 conv, 3 -> 64 channels, stride 2; padding 3 gives 112x112x64
                nn.Conv2d(3, 64, 7, 2, 3, bias=False),
                nn.BatchNorm2d(64),
                nn.ReLU(inplace=True),  # inplace=True overwrites the input tensor
                # 3x3 max pool, stride 2; padding 1 gives 56x56x64
                nn.MaxPool2d(3, 2, 1),
            )
            if bottleneck:  # ResNet50 and deeper use BottleNeckBlock
                self.residualBlocks1 = self.add_layers(64, 256, layer_num[0], 64, bottleneck=bottleneck)
                self.residualBlocks2 = self.add_layers(128, 512, layer_num[1], 256, 2, bottleneck)
                self.residualBlocks3 = self.add_layers(256, 1024, layer_num[2], 512, 2, bottleneck)
                self.residualBlocks4 = self.add_layers(512, 2048, layer_num[3], 1024, 2, bottleneck)
                self.fc = nn.Linear(2048, num_classes)
            else:  # ResNet18 and ResNet34 use the plain ResidualBlock
                self.residualBlocks1 = self.add_layers(64, 64, layer_num[0])
                self.residualBlocks2 = self.add_layers(64, 128, layer_num[1])
                self.residualBlocks3 = self.add_layers(128, 256, layer_num[2])
                self.residualBlocks4 = self.add_layers(256, 512, layer_num[3])
                self.fc = nn.Linear(512, num_classes)

        def add_layers(self, inchannel, outchannel, nums, pre_channel=64, stride=1, bottleneck=False):
            layers = []
            if not bottleneck:
                # First block of the stage: ResidualBlock checks inchannel != outchannel
                # itself and, if so, uses stride 2 and a 1x1 conv shortcut.
                layers.append(ResidualBlock(inchannel, outchannel))
                # Remaining nums - 1 blocks keep the channel count.
                for _ in range(1, nums):
                    layers.append(ResidualBlock(outchannel, outchannel))
            else:
                # First block receives the previous stage's output width (pre_channel);
                # passing pre_channel is also what tells the block to build a shortcut.
                layers.append(BottleNeckBlock(inchannel, outchannel, pre_channel, stride))
                # Remaining nums - 1 blocks: regular widths, no pre_channel.
                for _ in range(1, nums):
                    layers.append(BottleNeckBlock(inchannel, outchannel))
            return nn.Sequential(*layers)

        def forward(self, x):
            x = self.pre(x)
            x = self.residualBlocks1(x)
            x = self.residualBlocks2(x)
            x = self.residualBlocks3(x)
            x = self.residualBlocks4(x)
            x = F.avg_pool2d(x, 7)  # assumes a 7x7 feature map, i.e. 224x224 input
            x = x.view(x.size(0), -1)
            return self.fc(x)


    class ResidualBlock(nn.Module):
        """Plain residual block (ResNet18/34); ResNet50 and deeper use the bottleneck."""

        def __init__(self, inchannel, outchannel, stride=1, padding=1, shortcut=None):
            super().__init__()
            # First block of a stage: when the channel count changes, the first conv
            # uses stride 2 and the shortcut needs a 1x1 conv to match dimensions.
            if inchannel != outchannel:
                stride = 2
                shortcut = nn.Sequential(
                    nn.Conv2d(inchannel, outchannel, 1, stride, bias=False),
                    nn.BatchNorm2d(outchannel),
                )
            # Left branch: the residual function F(x)
            self.left = nn.Sequential(
                nn.Conv2d(inchannel, outchannel, 3, stride, padding, bias=False),
                nn.BatchNorm2d(outchannel),
                nn.ReLU(inplace=True),
                nn.Conv2d(outchannel, outchannel, 3, 1, padding, bias=False),
                nn.BatchNorm2d(outchannel),
            )
            # Right branch: the shortcut (None means identity)
            self.right = shortcut

        def forward(self, x):
            out = self.left(x)
            residual = x if self.right is None else self.right(x)
            return F.relu(out + residual)


    class BottleNeckBlock(nn.Module):
        """Bottleneck block used by ResNet50 and deeper."""

        def __init__(self, inchannel, outchannel, pre_channel=None, stride=1, shortcut=None):
            super().__init__()
            if pre_channel is None:
                # Not the first block of a stage: input width equals output width.
                pre_channel = outchannel
            else:
                # First block of a stage: project the previous stage's output.
                shortcut = nn.Sequential(
                    nn.Conv2d(pre_channel, outchannel, 1, stride, 0, bias=False),
                    nn.BatchNorm2d(outchannel),
                )
            self.left = nn.Sequential(
                # 1x1, reduce to inchannel
                nn.Conv2d(pre_channel, inchannel, 1, stride, 0, bias=False),
                nn.BatchNorm2d(inchannel),
                nn.ReLU(inplace=True),
                # 3x3, inchannel
                nn.Conv2d(inchannel, inchannel, 3, 1, 1, bias=False),
                nn.BatchNorm2d(inchannel),
                nn.ReLU(inplace=True),
                # 1x1, expand to outchannel
                nn.Conv2d(inchannel, outchannel, 1, 1, 0, bias=False),
                nn.BatchNorm2d(outchannel),
                # No ReLU here: the activation comes after the shortcut addition.
            )
            self.right = shortcut

        def forward(self, x):
            out = self.left(x)
            residual = x if self.right is None else self.right(x)
            return F.relu(out + residual)


    if __name__ == '__main__':
        num_classes = 6
        # Block counts per stage for ResNet18, 34, 50, 101, 152
        layer_nums = [[2, 2, 2, 2], [3, 4, 6, 3], [3, 4, 6, 3], [3, 4, 23, 3], [3, 8, 36, 3]]
        # Pick the variant: 0 = ResNet18, 1 = ResNet34, 2 = ResNet50, 3 = ResNet101, 4 = ResNet152
        i = 3
        bottleneck = i >= 2  # i < 2: plain ResidualBlock; i >= 2: BottleNeckBlock
        model = ResNetModel(num_classes, layer_nums[i], bottleneck)
        print(model)
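The padding values and the fixed 7x7 average pool in the code above depend on the input resolution, so it can help to trace the spatial size by hand. Below is a minimal sketch in plain Python using the standard convolution output-size formula. `conv_out_size` and `trace_resolution` are hypothetical helpers, not part of the model, and the stride-2 step of each later stage is modelled as a 3x3 convolution with padding 1 (the bottleneck's 1x1 stride-2 convolution gives the same sizes for the even inputs used here).

```python
def conv_out_size(size, kernel, stride, padding):
    """Output length along one spatial axis: floor((n + 2p - k) / s) + 1."""
    return (size + 2 * padding - kernel) // stride + 1


def trace_resolution(size=224):
    """Follow one spatial dimension through the stem, the four stages and the pool."""
    sizes = {}
    size = conv_out_size(size, kernel=7, stride=2, padding=3)
    sizes["conv1"] = size
    size = conv_out_size(size, kernel=3, stride=2, padding=1)
    sizes["maxpool"] = size
    sizes["stage1"] = size  # stage 1 keeps the resolution (stride 1)
    for name in ("stage2", "stage3", "stage4"):
        # The first block of each later stage downsamples with stride 2.
        size = conv_out_size(size, kernel=3, stride=2, padding=1)
        sizes[name] = size
    # F.avg_pool2d(x, 7) uses kernel 7 and, by default, stride 7.
    sizes["avgpool"] = conv_out_size(size, kernel=7, stride=7, padding=0)
    return sizes


if __name__ == "__main__":
    print(trace_resolution(224))
    print(trace_resolution(448))
```

With a 224x224 input the pooled map is 1x1, which is what the `fc` layer expects. With a 448x448 input the trace ends at 2x2, so the flattened features would no longer match the `fc` input size; an adaptive pooling layer is one common way around that.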