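© Author | Xiaolei Wang (王晓磊)

Affiliation | PhD student, Gaoling School of Artificial Intelligence, Renmin University of China

Advisor | Prof. Xin Zhao (赵鑫)

Research interests | Dialogue systems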

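1. Introduction

In recent years, large-scale pre-trained language models (PLMs), represented by BERT and the GPT series, have achieved great success across all areas of NLP. This article collects PLM-related papers published since BERT and GPT appeared, selects 163 representative works by citation count, and provisionally groups them into six categories: surveys, benchmarks, PLM design, PLM analysis, efficient PLMs, and using PLMs.

The paper list compiled here is also available on GitHub and will be updated continuously. Feel free to follow and star the repository.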

https://github.com/RUCAIBox/PLMPapers

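Wherever possible, each paper is followed by links to its PDF, code implementation, and project homepage, so that readers can explore the work further.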

2. Surveys

"Pre-trained models for natural language processing: A survey". Science China Technological Sciences(2020)

"Which *BERT? A Survey Organizing Contextualized Encoders". EMNLP(2020)

"A Primer in BERTology: What We Know About How BERT Works". TACL(2020)

"From static to dynamic word representations: a survey". International Journal of Machine Learning and Cybernetics(2020)

"Overview of the Transformer-based Models for NLP Tasks". 2020 15th Conference on Computer Science and Information Systems (FedCSIS)

"A Survey on Contextual Embeddings". arXiv(2020)

"The NLP Cookbook: Modern Recipes for Transformer Based Deep Learning Architectures". IEEE Access(2021)

"Pre-Trained Models: Past, Present and Future". arXiv(2021)

"A Survey of Transformers". arXiv(2021)

3. Benchmarks

XNLI: "XNLI: Evaluating Cross-lingual Sentence Representations". EMNLP(2018)

GLUE: "GLUE: A Multi-Task Benchmark and Analysis Platform for Natural Language Understanding". ICLR(2019)

SuperGLUE: "SuperGLUE: A Stickier Benchmark for General-Purpose Language Understanding Systems". NeurIPS(2019)

CLUE: "CLUE: A Chinese Language Understanding Evaluation Benchmark". COLING(2020)

XTREME: "XTREME: A Massively Multilingual Multi-task Benchmark for Evaluating Cross-lingual Generalization". ICML(2020)

XGLUE: "XGLUE: A New Benchmark Dataset for Cross-lingual Pre-training, Understanding and Generation". EMNLP(2020)

DialoGLUE: "DialoGLUE: A Natural Language Understanding Benchmark for Task-Oriented Dialogue". arXiv(2020)

4. PLM Design

4.1 General Design

GPT: "Improving Language Understanding by Generative Pre-Training". OpenAI(2018)

GPT-2: "Language Models are Unsupervised Multitask Learners". OpenAI(2019)

BERT: "BERT: Pre-training of Deep Bidirectional Transformers for Language Understanding". NAACL(2019)

XLNet: "XLNet: Generalized Autoregressive Pretraining for Language Understanding". NeurIPS(2019)

SBERT: "Sentence-BERT: Sentence Embeddings using Siamese BERT-Networks". ACL(2019)

UniLM: "Unified Language Model Pre-training for Natural Language Understanding and Generation". NeurIPS(2019)

MASS: "MASS: Masked Sequence to Sequence Pre-training for Language Generation". ICML(2019)

Chinese-BERT-wwm: "Pre-Training with Whole Word Masking for Chinese BERT". arXiv(2019)

"Cloze-driven Pretraining of Self-attention Networks". EMNLP(2019)

"BERT has a Mouth, and It Must Speak: BERT as a Markov Random Field Language Model". Workshop on Methods for Optimizing and Evaluating Neural Language Generation(2019)

GPT-3: "Language Models are Few-Shot Learners". arXiv(2020)

T5: "Exploring the Limits of Transfer Learning with a Unified Text-to-Text Transformer". JMLR(2020)

BART: "BART: Denoising Sequence-to-Sequence Pre-training for Natural Language Generation, Translation, and Comprehension". ACL(2020)

Poly-encoders: "Poly-encoders: Architectures and Pre-training Strategies for Fast and Accurate Multi-sentence Scoring". ICLR(2020)

SpanBERT: "SpanBERT: Improving Pre-training by Representing and Predicting Spans". TACL(2020)

ERNIE 2.0: "ERNIE 2.0: A Continual Pre-Training Framework for Language Understanding". AAAI(2020)

SemBERT: "Semantics-Aware BERT for Language Understanding". AAAI(2020)

"Leveraging Pre-trained Checkpoints for Sequence Generation Tasks". TACL(2020)

ProphetNet: "ProphetNet: Predicting Future N-gram for Sequence-to-Sequence Pre-training". EMNLP(2020)

UniLMv2: "UniLMv2: Pseudo-Masked Language Models for Unified Language Model Pre-Training". ICML(2020)

MacBERT: "Revisiting Pre-Trained Models for Chinese Natural Language Processing". EMNLP(2020)

MPNet: "MPNet: Masked and Permuted Pre-training for Language Understanding". arXiv(2020)

DeBERTa: "DeBERTa: Decoding-enhanced BERT with Disentangled Attention". ICLR(2021)

PALM: "PALM: Pre-training an Autoencoding&Autoregressive Language Model for Context-conditioned Generation". EMNLP(2020)

4.2 Knowledge-Enhanced

ERNIE(Baidu): "ERNIE: Enhanced Representation through Knowledge Integration". arXiv(2019)

KnowBert: "Knowledge Enhanced Contextual Word Representations". EMNLP(2019)

ERNIE(Tsinghua): "ERNIE: Enhanced Language Representation with Informative Entities". ACL(2019)

COMET: "COMET: Commonsense Transformers for Automatic Knowledge Graph Construction". ACL(2019)

K-BERT: "K-BERT: Enabling Language Representation with Knowledge Graph". AAAI(2020)

WKLM: "Pretrained Encyclopedia: Weakly Supervised Knowledge-Pretrained Language Model". ICLR(2020)

LUKE: "LUKE: Deep Contextualized Entity Representations with Entity-aware Self-attention". EMNLP(2020)

K-Adapter: "K-Adapter: Infusing Knowledge into Pre-Trained Models with Adapters". ICLR(2021)

KEPLER: "KEPLER: A Unified Model for Knowledge Embedding and Pre-trained Language Representation". TACL(2021)

4.3 Multilingual

XLM: "Cross-lingual Language Model Pretraining". arXiv(2019)

"Massively Multilingual Sentence Embeddings for Zero-Shot Cross-Lingual Transfer and Beyond". TACL(2019)

UDify: "75 Languages, 1 Model: Parsing Universal Dependencies Universally". EMNLP(2019)

Unicoder: "Unicoder: A Universal Language Encoder by Pre-training with Multiple Cross-lingual Tasks". EMNLP(2019)

XLM-R: "Unsupervised Cross-lingual Representation Learning at Scale". ACL(2020)

"Multilingual Alignment of Contextual Word Representations". ICLR(2020)

mBART: "Multilingual Denoising Pre-training for Neural Machine Translation". TACL(2020)

mT5: "mT5: A Massively Multilingual Pre-trained Text-to-Text Transformer". NAACL(2021)

InfoXLM: "InfoXLM: An Information-Theoretic Framework for Cross-Lingual Language Model Pre-Training". NAACL(2021)

4.4 Multimodal

ViLBERT: "ViLBERT: Pretraining Task-Agnostic Visiolinguistic Representations for Vision-and-Language Tasks". NeurIPS(2019)

LXMERT: "LXMERT: Learning Cross-Modality Encoder Representations from Transformers". EMNLP(2019)

VideoBERT: "VideoBERT: A Joint Model for Video and Language Representation Learning". ICCV(2019)

MulT: "Multimodal Transformer for Unaligned Multimodal Language Sequences". ACL(2019)

VisualBERT: "VisualBERT: A Simple and Performant Baseline for Vision and Language". arXiv(2019)

B2T2: "Fusion of Detected Objects in Text for Visual Question Answering". EMNLP(2019)

VL-BERT: "VL-BERT: Pre-training of Generic Visual-Linguistic Representations". ICLR(2020)

Unicoder-VL: "Unicoder-VL: A Universal Encoder for Vision and Language by Cross-Modal Pre-Training". AAAI(2020)

VLP: "Unified Vision-Language Pre-Training for Image Captioning and VQA". AAAI(2020)

UNITER: "UNITER: UNiversal Image-TExt Representation Learning". ECCV(2020)

Oscar: "Oscar: Object-Semantics Aligned Pre-training for Vision-Language Tasks". ECCV(2020)

"12-in-1: Multi-Task Vision and Language Representation Learning". CVPR(2020)

ActBERT: "ActBERT: Learning Global-Local Video-Text Representations". CVPR(2020)

VLN: "Vision-Language Navigation With Self-Supervised Auxiliary Reasoning Tasks". CVPR(2020)

VILLA: "Large-Scale Adversarial Training for Vision-and-Language Representation Learning". arXiv(2020)

ImageBERT: "ImageBERT: Cross-modal Pre-training with Large-scale Weak-supervised Image-Text Data". arXiv(2020)

ALIGN: "Scaling Up Visual and Vision-Language Representation Learning With Noisy Text Supervision". ICML(2021)

ClipBERT: "Less Is More: ClipBERT for Video-and-Language Learning via Sparse Sampling". CVPR(2021)

DALL·E: "Zero-Shot Text-to-Image Generation". arXiv(2021)

CLIP: "Learning Transferable Visual Models From Natural Language Supervision". arXiv(2021)

4.5 Information Retrieval

ORQA: "Latent Retrieval for Weakly Supervised Open Domain Question Answering". ACL(2019)

REALM: "REALM: Retrieval-Augmented Language Model Pre-Training". arXiv(2020)

RAG: "Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks". NeurIPS(2020)

DPR: "Dense Passage Retrieval for Open-Domain Question Answering". EMNLP(2020)

5. PLM Analysis

5.1 Knowledge

"What Does BERT Look at? An Analysis of BERT’s Attention". BlackBoxNLP(2019)

"BERT Rediscovers the Classical NLP Pipeline". ACL(2019)

"How Multilingual is Multilingual BERT?". ACL(2019)

"A Structural Probe for Finding Syntax in Word Representations". NAACL(2019)

"Language Models as Knowledge Bases?". EMNLP(2019)

"What Does BERT Learn about the Structure of Language?". ACL(2019)

"Linguistic Knowledge and Transferability of Contextual Representations". NAACL(2019)

"Assessing BERT's Syntactic Abilities". arXiv(2019)

"Probing Neural Network Comprehension of Natural Language Arguments". ACL(2019)

"How Contextual are Contextualized Word Representations? Comparing the Geometry of BERT, ELMo, and GPT-2 Embeddings". EMNLP(2019)

"Visualizing and Measuring the Geometry of BERT". NeurIPS(2019)

"Designing and Interpreting Probes with Control Tasks". EMNLP(2019)

"Open Sesame: Getting inside BERT’s Linguistic Knowledge". BlackBoxNLP(2019)

"What do you learn from context? Probing for sentence structure in contextualized word representations". ICLR(2019)

"Commonsense Knowledge Mining from Pretrained Models". EMNLP(2019)

"Do NLP Models Know Numbers? Probing Numeracy in Embeddings". EMNLP(2019)

"On the Cross-lingual Transferability of Monolingual Representations". ACL(2020)

"Cross-Lingual Ability of Multilingual BERT: An Empirical Study". ICLR(2020)

"What BERT Is Not: Lessons from a New Suite of Psycholinguistic Diagnostics for Language Models". TACL(2020)

"How Much Knowledge Can You Pack Into the Parameters of a Language Model?". EMNLP(2020)

"How Can We Know What Language Models Know?". TACL(2020)

"oLMpics - On What Language Model Pre-training Captures". TACL(2020)

"Information-Theoretic Probing with Minimum Description Length". EMNLP(2020)

"Inducing Relational Knowledge from BERT". AAAI(2020)

AutoPrompt: "AutoPrompt: Eliciting Knowledge from Language Models with Automatically Generated Prompts". EMNLP(2020)

"Emergent linguistic structure in artificial neural networks trained by self-supervision". PNAS(2020)

"Evaluating Commonsense in Pre-Trained Language Models". AAAI(2020)

5.2 Robustness

"Universal Adversarial Triggers for Attacking and Analyzing NLP". EMNLP(2019)

"Pretrained Transformers Improve Out-of-Distribution Robustness". ACL(2020)

BERT-ATTACK: "BERT-ATTACK: Adversarial Attack Against BERT Using BERT". EMNLP(2020)

"Is BERT Really Robust? A Strong Baseline for Natural Language Attack on Text Classification and Entailment". AAAI(2020)

5.3 Sparsity

"Are Sixteen Heads Really Better than One?". NeurIPS(2019)

"Analyzing Multi-Head Self-Attention: Specialized Heads Do the Heavy Lifting, the Rest Can Be Pruned". ACL(2019)

"Revealing the Dark Secrets of BERT". EMNLP(2019)

"The Lottery Ticket Hypothesis for Pre-trained BERT Networks". NeurIPS(2020)

"When BERT Plays the Lottery, All Tickets Are Winning". EMNLP(2020)

5.4 Others

"Scaling Laws for Neural Language Models". arXiv(2020)

"Extracting Training Data from Large Language Models". arXiv(2020)

6. Efficient PLMs

6.1 Model Training

RoBERTa: "RoBERTa: A Robustly Optimized BERT Pretraining Approach". arXiv(2019)

"Efficient Training of BERT by Progressively Stacking". ICML(2019)

Megatron-LM: "Megatron-LM: Training Multi-Billion Parameter Language Models Using Model Parallelism". arXiv(2019)

ELECTRA: "ELECTRA: Pre-training Text Encoders as Discriminators Rather Than Generators". ICLR(2020)

"Large Batch Optimization for Deep Learning: Training BERT in 76 minutes". ICLR(2020)

GShard: "GShard: Scaling Giant Models with Conditional Computation and Automatic Sharding". arXiv(2020)

Admin: "Understanding the Difficulty of Training Transformers". EMNLP(2020)

ZeRO: "ZeRO: Memory optimizations Toward Training Trillion Parameter Models". SC20: International Conference for High Performance Computing, Networking, Storage and Analysis

Switch Transformers: "Switch Transformers: Scaling to Trillion Parameter Models with Simple and Efficient Sparsity". arXiv(2021)

6.2 Model Compression

DistilBERT: "DistilBERT, a distilled version of BERT: smaller, faster, cheaper and lighter". arXiv(2019)

PKD: "Patient Knowledge Distillation for BERT Model Compression". EMNLP(2019)

"Distilling Task-Specific Knowledge from BERT into Simple Neural Networks". arXiv(2019)

Q8BERT: "Q8BERT: Quantized 8Bit BERT". 5th Workshop on Energy Efficient Machine Learning and Cognitive Computing - NeurIPS 2019

ALBERT: "ALBERT: A Lite BERT for Self-supervised Learning of Language Representations". ICLR(2020)

TinyBERT: "TinyBERT: Distilling BERT for Natural Language Understanding". EMNLP(2020)

Layerdrop: "Reducing Transformer Depth on Demand with Structured Dropout". ICLR(2020)

Q-BERT: "Q-BERT: Hessian Based Ultra Low Precision Quantization of BERT". AAAI(2020)

MobileBERT: "MobileBERT: a Compact Task-Agnostic BERT for Resource-Limited Devices". ACL(2020)

"Compressing BERT: Studying the Effects of Weight Pruning on Transfer Learning". 5th Workshop on Representation Learning for NLP(2020)

MiniLM: "MiniLM: Deep Self-Attention Distillation for Task-Agnostic Compression of Pre-Trained Transformers". arXiv(2020)

FastBERT: "FastBERT: a Self-distilling BERT with Adaptive Inference Time". ACL(2020)

DeeBERT: "DeeBERT: Dynamic Early Exiting for Accelerating BERT Inference". ACL(2020)

7. Using PLMs

7.1 Two-Stage

"Sentence Encoders on STILTs: Supplementary Training on Intermediate Labeled-data Tasks". arXiv(2018)

"How to Fine-Tune BERT for Text Classification?". CCL(2019)

"Don’t Stop Pretraining: Adapt Language Models to Domains and Tasks". ACL(2020)

"Intermediate-Task Transfer Learning with Pretrained Language Models: When and Why Does It Work?". ACL(2020)

7.2 Multi-Task

MT-DNN: "Multi-Task Deep Neural Networks for Natural Language Understanding". ACL(2019)

"BAM! Born-Again Multi-Task Networks for Natural Language Understanding". ACL(2019)

"Improving Multi-Task Deep Neural Networks via Knowledge Distillation for Natural Language Understanding". arXiv(2019)

7.3 Adapter

"BERT and PALs: Projected Attention Layers for Efficient Adaptation in Multi-Task Learning". ICML(2019)

Adapter: "Parameter-Efficient Transfer Learning for NLP". ICML(2019)

7.4 Prompt

PET: "Exploiting Cloze-Questions for Few-Shot Text Classification and Natural Language Inference". EACL(2021)

"It’s Not Just Size That Matters: Small Language Models Are Also Few-Shot Learners". NAACL(2021)

"Prefix-Tuning: Optimizing Continuous Prompts for Generation". arXiv(2021)

LM-BFF: "Making Pre-trained Language Models Better Few-shot Learners". ACL(2021)

"What Makes Good In-Context Examples for GPT-3?". arXiv(2021)

"The Power of Scale for Parameter-Efficient Prompt Tuning". arXiv(2021)

7.5 Others

"To Tune or Not to Tune? Adapting Pretrained Representations to Diverse Tasks". RepL4NLP(2019)

"An Embarrassingly Simple Approach for Transfer Learning from Pretrained Language Models". NAACL(2019)

"Fine-Tuning Pretrained Language Models: Weight Initializations, Data Orders, and Early Stopping". arXiv(2020)

SMART: "SMART: Robust and Efficient Fine-Tuning for Pre-trained Natural Language Models through Principled Regularized Optimization". EMNLP(2020)

"Revisiting Few-sample BERT Fine-tuning". ICLR(2021)

- END -

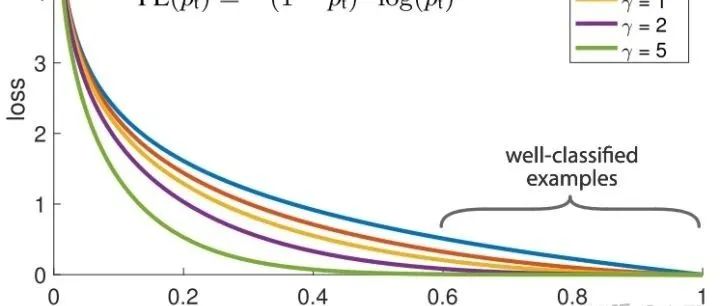
