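It has been a long time since my last update. The previous post was written when I had only just started with deep learning; its perspective was narrow and its content rough.

Recently I have been working with PyTorch, and I have to admit that it is considerably more flexible than TensorFlow.

This time I will use PyTorch for traffic flow prediction, with the same data as in the previous post.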

Baidu Netdisk: https://pan.baidu.com/s/19vKN2eZZPbOg36YEWts4aQ

Password: 4uh7

Let's get started.

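Contents

- Loading the data

- Building the dataset

- Building the Dataset

- Generating the training data

- Building the LSTM model

- Defining the model and loss function

- Model prediction

- Model evaluation

- Complete code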

Loading the data

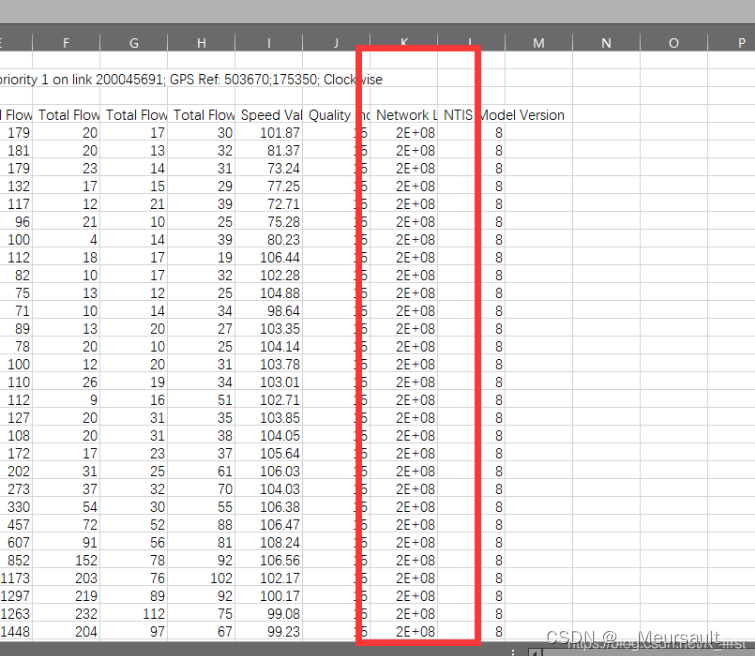

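When importing the data, the Network column needs to be removed.

    f = pd.read_csv(r'..\Desktop\AE86.csv')  # raw string so the backslashes are not treated as escapes

    # Reset the column labels using the header row stored at index 2
    def set_columns():
        columns = []
        for i in f.loc[2]:
            columns.append(i.strip())
        return columns

    f.columns = set_columns()
    f.drop([0, 1, 2], inplace=True)

    # Read the flow column as a float array of shape (N, 1)
    data = f['Total Carriageway Flow'].astype(np.float64).values[:, np.newaxis]
    data.shape  # (2880, 1)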
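To make the header-resetting step easier to follow, here is a minimal sketch on a made-up miniature file. The layout (three banner lines before the real header) and every value in it are assumptions for illustration only; the actual AE86.csv may look different.

```python
import io

import numpy as np
import pandas as pd

# Hypothetical miniature of the raw file: the first line becomes the
# DataFrame header, so the real header row ends up at index 2.
raw = io.StringIO(
    "Network,,\n"
    "Site A,,\n"
    "report,,\n"
    " Local Date , Local Time , Total Carriageway Flow \n"
    "2019-01-01,00:14,12\n"
    "2019-01-01,00:29,15\n"
)
f = pd.read_csv(raw)

# Promote row 2 to column labels, stripping the padding spaces
f.columns = [str(name).strip() for name in f.loc[2]]

# Drop the banner rows and the old header row
f = f.drop([0, 1, 2])

# Same conversion as in the post: a float column vector of shape (N, 1)
data = f["Total Carriageway Flow"].astype(np.float64).values[:, np.newaxis]
print(data.shape)
```

The real file is handled the same way, only with 2880 data rows instead of two.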

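Building the dataset

PyTorch provides standard modules for splitting data into batches: Dataset and DataLoader. These two trip up many people who are new to PyTorch. I will only cover them briefly here; the official documentation and other blogs go into more detail.

A Dataset is like a big crate for holding things, say a crate of apples. A crate can hold many small boxes, each box holds apples, and the number of apples per box can differ from one crate to another.

A DataLoader is the rule for taking boxes out of the crate: for example, take 10 boxes at a time in order, or take 5 boxes at random.

Using Dataset and DataLoader is not the only way to build a dataset, though; the data can also be assembled directly, as in the previous post.

Still, since we are using PyTorch, we may as well use its distinctive features. Based on the Dataset approach above, we can build our own dataset.

Defining a custom Dataset takes a little work. It must implement two methods: `__len__` and `__getitem__`.

`__len__` returns the number of samples in the Dataset.

`__getitem__` returns the sample at a given index.

For convenience, this Dataset also includes the normalization and de-normalization steps.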
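As a small illustration of the two required methods, here is a toy Dataset and a DataLoader that takes four "boxes" at a time in order. The class name Boxes and its contents are made up for this sketch and have nothing to do with the traffic data.

```python
import torch
from torch.utils.data import Dataset, DataLoader


class Boxes(Dataset):
    """Hypothetical toy dataset: sample i is the pair (i, i squared)."""

    def __init__(self, n):
        self.n = n

    def __len__(self):
        # Number of samples the DataLoader is allowed to request
        return self.n

    def __getitem__(self, index):
        # Build and return the sample for one index
        x = torch.tensor([float(index)])
        return {"x": x, "y": x ** 2}


boxes = Boxes(10)

# Take 4 samples at a time, in order; shuffle=True would pick them at random
loader = DataLoader(boxes, batch_size=4, shuffle=False)

batch_sizes = [batch["x"].shape[0] for batch in loader]
first_batch = next(iter(loader))
print(batch_sizes, first_batch["x"].shape)
```

The DataLoader stacks the dictionaries returned by `__getitem__` key by key, so each batch is again a dictionary of tensors.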

Building the Dataset

    class LoadData(Dataset):
        def __init__(self, data, time_step, divide_days, train_mode):
            self.train_mode = train_mode
            self.time_step = time_step
            self.train_days = divide_days[0]
            self.test_days = divide_days[1]
            self.one_day_length = int(24 * 4)  # 96 samples per day
            # flow_norm is (max_data, min_data)
            self.flow_norm, self.flow_data = LoadData.pre_process_data(data)
            # To skip normalization, use this instead:
            # self.flow_data = data

        def __len__(self):
            if self.train_mode == "train":
                return self.train_days * self.one_day_length - self.time_step
            elif self.train_mode == "test":
                return self.test_days * self.one_day_length
            else:
                raise ValueError("train mode error")

        def __getitem__(self, index):
            if self.train_mode == "train":
                index = index
            elif self.train_mode == "test":
                # Test samples start right after the training days
                index += self.train_days * self.one_day_length
            else:
                raise ValueError("train mode error")
            data_x, data_y = LoadData.slice_data(self.flow_data, self.time_step, index, self.train_mode)
            data_x = LoadData.to_tensor(data_x)
            data_y = LoadData.to_tensor(data_y)
            return {"flow_x": data_x, "flow_y": data_y}

        # This is the key step: slicing the series into (window, next value) pairs
        @staticmethod
        def slice_data(data, time_step, index, train_mode):
            if train_mode == "train":
                start_index = index
                end_index = index + time_step
            elif train_mode == "test":
                start_index = index - time_step
                end_index = index
            else:
                raise ValueError("train mode error")
            data_x = data[start_index: end_index, :]
            data_y = data[end_index]
            return data_x, data_y

        # Data preprocessing
        @staticmethod
        def pre_process_data(data):
            norm_base = LoadData.normalized_base(data)
            normalized_data = LoadData.normalized_data(data, norm_base[0], norm_base[1])
            return norm_base, normalized_data

        # Maximum and minimum of the raw data
        @staticmethod
        def normalized_base(data):
            max_data = np.max(data, keepdims=True)  # keepdims keeps the number of dimensions
            min_data = np.min(data, keepdims=True)
            # max_data.shape ---> (1, 1)
            return max_data, min_data

        # Min-max normalization
        @staticmethod
        def normalized_data(data, max_data, min_data):
            data_base = max_data - min_data
            normalized_data = (data - min_data) / data_base
            return normalized_data

        # De-normalization, used when computing the error metrics and plotting
        @staticmethod
        def recoverd_data(data, max_data, min_data):
            data_base = max_data - min_data
            # Inverse of (data - min) / base is data * base + min
            recoverd_data = data * data_base + min_data
            return recoverd_data

        @staticmethod
        def to_tensor(data):
            return torch.tensor(data, dtype=torch.float)
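Min-max normalization maps each value x to (x - min) / (max - min), so its inverse has to be x * (max - min) + min. The round trip can be checked with a few made-up numbers; the function names normalize and recover below are placeholders for this sketch, not the methods of the class.

```python
import numpy as np


def normalize(data, max_data, min_data):
    # Scale the data into [0, 1]
    return (data - min_data) / (max_data - min_data)


def recover(data, max_data, min_data):
    # Undo the scaling: multiply by the range, then add the minimum back
    return data * (max_data - min_data) + min_data


flow = np.array([[120.0], [80.0], [200.0], [160.0]])  # made-up flow values, shape (4, 1)
max_data = np.max(flow, keepdims=True)  # shape (1, 1)
min_data = np.min(flow, keepdims=True)  # shape (1, 1)

scaled = normalize(flow, max_data, min_data)
restored = recover(scaled, max_data, min_data)
print(np.allclose(restored, flow))
```

If the minimum were subtracted instead of added in the inverse, every recovered value would be off by twice the minimum, which would distort the error metrics reported in vehicle counts.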

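Generating the training data

    # Use the first 25 days as the training set and the last 5 days as the test set
    divide_days = [25, 5]
    time_step = 5  # time-step length: number of past points in each input window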

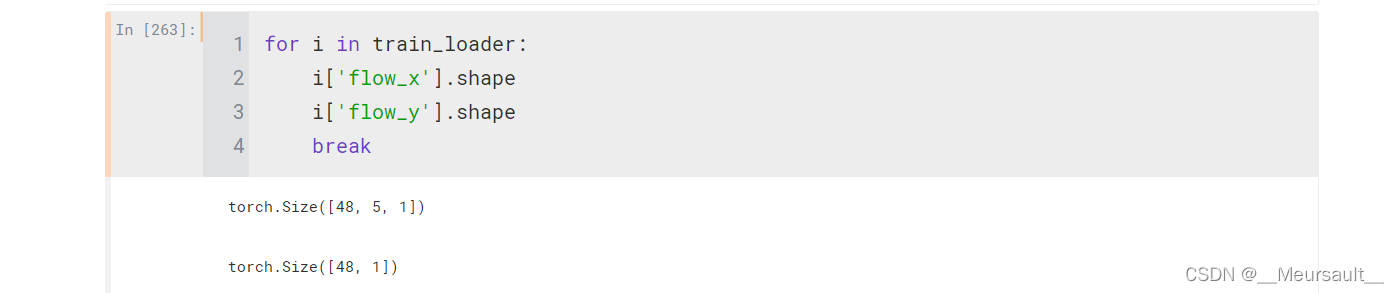

    batch_size = 48
    train_data = LoadData(data, time_step, divide_days, "train")
    test_data = LoadData(data, time_step, divide_days, "test")
    train_loader = DataLoader(train_data, batch_size=batch_size, shuffle=True)
    test_loader = DataLoader(test_data, batch_size=batch_size, shuffle=False)

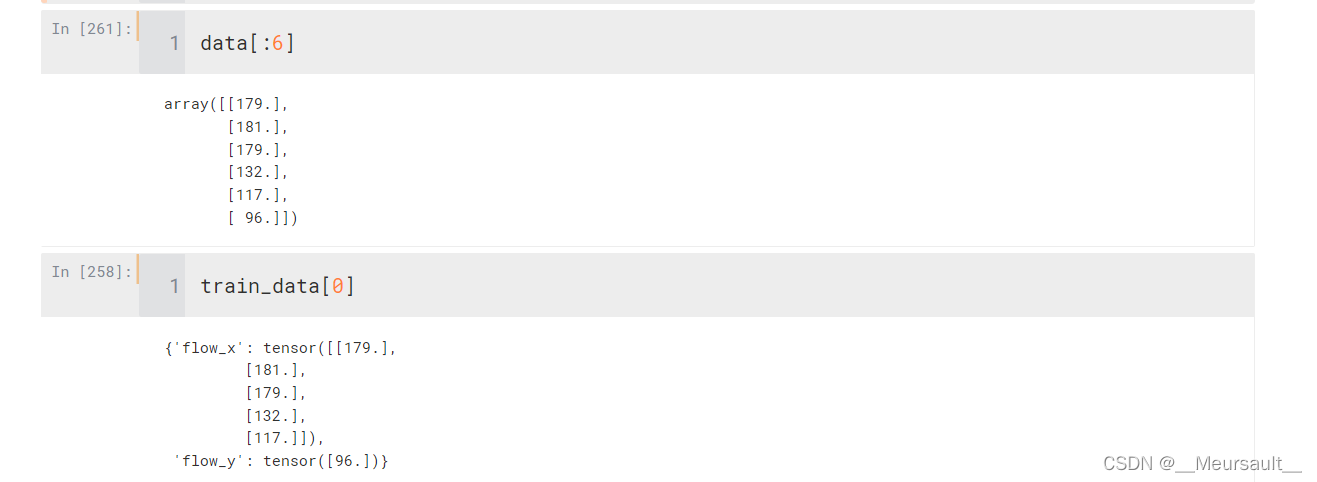

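The test data is not shuffled. You can inspect train_data[0], which returns the first window of time_step values together with the value at the following time step. Note that these values are already normalized; to check that the slicing is correct, skip the pre_process_data call in `__init__` and compare the result against the raw data.

The first time-step window without normalization

Note that a DataLoader is an iterable, so its batches have to be taken out in a loop.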
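To show what comes out of the loop, here is a sketch with a stripped-down sliding-window dataset on synthetic data. The Windows class is a hypothetical stand-in for LoadData (no normalization, no train/test modes); only the dictionary keys are borrowed from it.

```python
import numpy as np
import torch
from torch.utils.data import Dataset, DataLoader


class Windows(Dataset):
    """Hypothetical stand-in for LoadData: sliding windows over an (N, 1) series."""

    def __init__(self, series, time_step):
        self.series = torch.tensor(series, dtype=torch.float)
        self.time_step = time_step

    def __len__(self):
        # The last time_step points have no following value to predict
        return len(self.series) - self.time_step

    def __getitem__(self, index):
        end = index + self.time_step
        return {"flow_x": self.series[index:end], "flow_y": self.series[end]}


series = np.arange(20, dtype=np.float64)[:, np.newaxis]  # synthetic data, shape (20, 1)
loader = DataLoader(Windows(series, time_step=5), batch_size=4, shuffle=False)

shapes = []
for batch in loader:  # a DataLoader is consumed by iterating over it
    shapes.append((tuple(batch["flow_x"].shape), tuple(batch["flow_y"].shape)))

first = next(iter(loader))  # a single batch can also be pulled through an iterator
print(shapes[0], shapes[-1])
```

Each batch has flow_x of shape [B, T, D] and flow_y of shape [B, D]; the last batch is smaller when the number of samples is not a multiple of the batch size.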

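Building the LSTM model

PyTorch is flexible, and the computation inside a model is easy to follow. A PyTorch model usually has two parts: `__init__` and forward.

`__init__` defines the layers the model uses.

forward carries out the computation.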

    # Build the LSTM network
    class LSTM(nn.Module):
        def __init__(self, input_num, hid_num, layers_num, out_num, batch_first=True):
            super().__init__()
            self.l1 = nn.LSTM(
                input_size=input_num,
                hidden_size=hid_num,
                num_layers=layers_num,
                batch_first=batch_first
            )
            self.out = nn.Linear(hid_num, out_num)

        def forward(self, data):
            flow_x = data['flow_x']  # B * T * D
            # None means the initial hidden and cell states default to zeros
            l_out, (h_n, c_n) = self.l1(flow_x, None)
            # print(l_out[:, -1, :].shape)  # uncomment to check the shape
            out = self.out(l_out[:, -1, :])  # use the output at the last time step
            return out

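Unlike in TensorFlow, an LSTM in PyTorch can define several layers at once through num_layers, so there is no need to stack LSTM layers one by one. Each call returns three values: the output of the last layer at every time step (l_out), the final hidden state of each layer (h_n), and the final cell state of each layer (c_n).

l_out collects the hidden state of the last layer at every time step, so when returning we can slice l_out to take the last time step. Equivalently, we can use the last layer's entry of h_n directly.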
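The shapes of the three return values, and the equivalence between the last time step of l_out and the last layer of h_n, can be checked on random input. This is a small sketch with the same sizes as the model in this post; the batch of 4 random windows is made up.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

# Same configuration as in the post: 1 input feature, 50 hidden units, 3 layers
lstm = nn.LSTM(input_size=1, hidden_size=50, num_layers=3, batch_first=True)

x = torch.randn(4, 5, 1)  # B=4 windows, T=5 time steps, D=1 feature
l_out, (h_n, c_n) = lstm(x)  # omitting the initial state means zeros

print(l_out.shape)  # [B, T, hidden]: last layer, every time step
print(h_n.shape)    # [layers, B, hidden]: every layer, last time step
print(c_n.shape)    # [layers, B, hidden]

# For a unidirectional LSTM these two are the same tensor values
same = torch.allclose(l_out[:, -1, :], h_n[-1])
print(same)
```

Note that h_n keeps the layer dimension first even when batch_first=True, so h_n[-1] selects the top layer rather than the last sample.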

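PyTorch can also use Sequential. To do so, the Dataset above would need to be changed, because it returns a dictionary {"flow_x", "flow_y"}, whereas a Sequential model can only be given "flow_x". Alternatively, as with TensorFlow, skip the Dataset and split the data directly with a series of operations (as in the previous post).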
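One possible way to take the Sequential route is sketched below. nn.LSTM returns a tuple, so its result cannot be passed straight into nn.Linear; the LastStep module here is a hypothetical adapter written for this sketch (it is not part of PyTorch or of the original code), and the model is fed the "flow_x" tensor directly instead of the dictionary.

```python
import torch
import torch.nn as nn


class LastStep(nn.Module):
    """Hypothetical adapter: keep only the last time step of the LSTM output."""

    def forward(self, lstm_result):
        l_out, _ = lstm_result  # discard (h_n, c_n)
        return l_out[:, -1, :]


model = nn.Sequential(
    nn.LSTM(input_size=1, hidden_size=50, num_layers=3, batch_first=True),
    LastStep(),
    nn.Linear(50, 1),
)

flow_x = torch.randn(4, 5, 1)  # made-up batch: B=4, T=5, D=1
out = model(flow_x)
print(out.shape)
```

With a Dataset that returns a dictionary, the training loop would call model(batch["flow_x"]) rather than passing the whole batch.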

Defining the model and loss function

    input_num = 1   # input feature dimension
    hid_num = 50    # size of the hidden state
    layers_num = 3  # number of LSTM layers
    out_num = 1     # output dimension
    lstm = LSTM(input_num, hid_num, layers_num, out_num)
    loss_func = nn.MSELoss()
    optimizer = torch.optim.Adam(lstm.parameters())

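Model prediction

PyTorch does not report the loss as conveniently as TensorFlow does, so the output has to be written by hand. TensorBoard can also be used to track the loss, but it is more trouble for beginners.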

    lstm.train()
    epoch_loss_change = []
    for epoch in range(30):
        epoch_loss = 0.0
        start_time = time.time()
        for data_ in train_loader:
            lstm.zero_grad()
            predict = lstm(data_)
            loss = loss_func(predict, data_['flow_y'])
            epoch_loss += loss.item()
            loss.backward()
            optimizer.step()
        epoch_loss_change.append(1000 * epoch_loss / len(train_data))
        end_time = time.time()
        print("Epoch: {:04d}, Loss: {:02.4f}, Time: {:02.2f} mins".format(
            epoch, 1000 * epoch_loss / len(train_data), (end_time - start_time) / 60))
    plt.plot(epoch_loss_change)

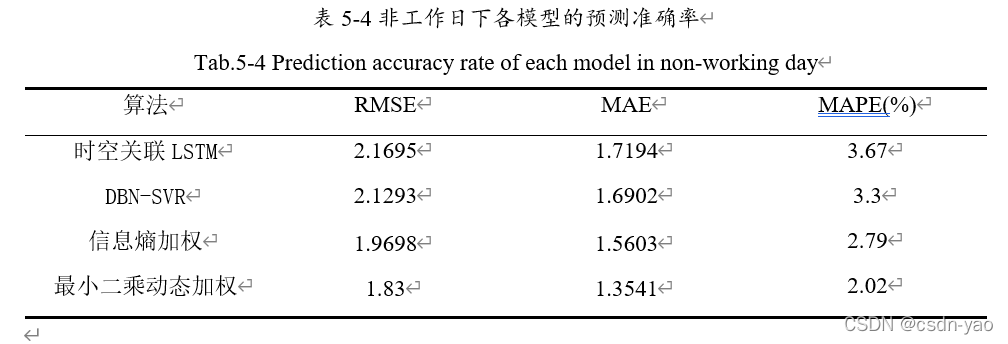

Model evaluation

    lstm.eval()
    with torch.no_grad():  # disable gradient tracking
        total_loss = 0.0
        pre_flow = np.zeros([batch_size, 1])  # [B, D], T=1; zero-filled placeholder with the target's shape
        real_flow = np.zeros_like(pre_flow)
        for data_ in test_loader:
            pre_value = lstm(data_)
            loss = loss_func(pre_value, data_['flow_y'])
            total_loss += loss.item()
            # De-normalize
            pre_value = LoadData.recoverd_data(
                pre_value.detach().numpy(),
                test_data.flow_norm[0].squeeze(1),  # max_data
                test_data.flow_norm[1].squeeze(1),  # min_data
            )
            target_value = LoadData.recoverd_data(
                data_['flow_y'].detach().numpy(),
                test_data.flow_norm[0].squeeze(1),
                test_data.flow_norm[1].squeeze(1),
            )
            pre_flow = np.concatenate([pre_flow, pre_value])
            real_flow = np.concatenate([real_flow, target_value])
        # Drop the zero-filled placeholder rows
        pre_flow = pre_flow[batch_size:]
        real_flow = real_flow[batch_size:]

    # Compute the errors
    mse = mean_squared_error(real_flow, pre_flow)
    rmse = math.sqrt(mse)
    mae = mean_absolute_error(real_flow, pre_flow)
    print('Mean squared error ---', mse)
    print('Root mean squared error ---', rmse)
    print('Mean absolute error --', mae)
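For reference, the three error measures can also be written out by hand with NumPy. The numbers below are made up purely to show the arithmetic; they are not results from the model.

```python
import math

import numpy as np

real = np.array([[100.0], [150.0], [200.0]])  # made-up target flows
pred = np.array([[110.0], [140.0], [200.0]])  # made-up predictions

err = pred - real

mse = float(np.mean(err ** 2))     # mean of the squared errors
rmse = math.sqrt(mse)              # square root of the MSE, in the units of the data
mae = float(np.mean(np.abs(err)))  # mean of the absolute errors

print(mse, rmse, mae)
```

Because RMSE and MAE are in the same units as the flow itself, they are only meaningful here after the predictions have been de-normalized.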

    # Plot the predictions
    font_set = FontProperties(fname=r"C:\Windows\Fonts\simsun.ttc", size=15)  # SimSun, size 15, for the Chinese labels
    plt.figure(figsize=(15, 10))
    plt.plot(real_flow, label='Real_Flow', color='r')
    plt.plot(pre_flow, label='Pre_Flow')
    plt.xlabel('测试序列', fontproperties=font_set)  # "test sequence"
    plt.ylabel('交通流量/辆', fontproperties=font_set)  # "traffic flow / vehicles"
    plt.legend()

    # Save the prediction figure
    # plt.savefig('...\Desktop\123.jpg')

Complete code

    import math
    import time

    import matplotlib.pyplot as plt
    import numpy as np
    import pandas as pd
    import torch
    import torch.nn as nn
    from matplotlib.font_manager import FontProperties  # allows Chinese text in plots
    from sklearn.metrics import mean_squared_error, mean_absolute_error
    from torch.utils.data import DataLoader
    from torch.utils.data import Dataset

    # Load the data

    f = pd.read_csv(r'..\Desktop\AE86.csv')  # raw string so the backslashes are not treated as escapes

    # Reset the column labels using the header row stored at index 2
    def set_columns():
        columns = []
        for i in f.loc[2]:
            columns.append(i.strip())
        return columns

    f.columns = set_columns()
    f.drop([0, 1, 2], inplace=True)

    # Read the flow column as a float array of shape (N, 1)
    data = f['Total Carriageway Flow'].astype(np.float64).values[:, np.newaxis]

    class LoadData(Dataset):
        def __init__(self, data, time_step, divide_days, train_mode):
            self.train_mode = train_mode
            self.time_step = time_step
            self.train_days = divide_days[0]
            self.test_days = divide_days[1]
            self.one_day_length = int(24 * 4)  # 96 samples per day
            # flow_norm is (max_data, min_data)
            self.flow_norm, self.flow_data = LoadData.pre_process_data(data)
            # To skip normalization, use this instead:
            # self.flow_data = data

        def __len__(self):
            if self.train_mode == "train":
                return self.train_days * self.one_day_length - self.time_step
            elif self.train_mode == "test":
                return self.test_days * self.one_day_length
            else:
                raise ValueError("train mode error")

        def __getitem__(self, index):
            if self.train_mode == "train":
                index = index
            elif self.train_mode == "test":
                # Test samples start right after the training days
                index += self.train_days * self.one_day_length
            else:
                raise ValueError("train mode error")
            data_x, data_y = LoadData.slice_data(self.flow_data, self.time_step, index, self.train_mode)
            data_x = LoadData.to_tensor(data_x)
            data_y = LoadData.to_tensor(data_y)
            return {"flow_x": data_x, "flow_y": data_y}

        # Slice the series into (window, next value) pairs
        @staticmethod
        def slice_data(data, time_step, index, train_mode):
            if train_mode == "train":
                start_index = index
                end_index = index + time_step
            elif train_mode == "test":
                start_index = index - time_step
                end_index = index
            else:
                raise ValueError("train mode error")
            data_x = data[start_index: end_index, :]
            data_y = data[end_index]
            return data_x, data_y

        # Data preprocessing
        @staticmethod
        def pre_process_data(data):
            norm_base = LoadData.normalized_base(data)
            normalized_data = LoadData.normalized_data(data, norm_base[0], norm_base[1])
            return norm_base, normalized_data

        # Maximum and minimum of the raw data
        @staticmethod
        def normalized_base(data):
            max_data = np.max(data, keepdims=True)  # keepdims keeps the number of dimensions
            min_data = np.min(data, keepdims=True)
            # max_data.shape ---> (1, 1)
            return max_data, min_data

        # Min-max normalization
        @staticmethod
        def normalized_data(data, max_data, min_data):
            data_base = max_data - min_data
            normalized_data = (data - min_data) / data_base
            return normalized_data

        # De-normalization, used when computing the error metrics and plotting
        @staticmethod
        def recoverd_data(data, max_data, min_data):
            data_base = max_data - min_data
            # Inverse of (data - min) / base is data * base + min
            recoverd_data = data * data_base + min_data
            return recoverd_data

        @staticmethod
        def to_tensor(data):
            return torch.tensor(data, dtype=torch.float)

    # Split the data

    divide_days = [25, 5]
    time_step = 5
    batch_size = 48
    train_data = LoadData(data, time_step, divide_days, "train")
    test_data = LoadData(data, time_step, divide_days, "test")
    train_loader = DataLoader(train_data, batch_size=batch_size, shuffle=True)
    test_loader = DataLoader(test_data, batch_size=batch_size, shuffle=False)

    # Build the LSTM network

    class LSTM(nn.Module):
        def __init__(self, input_num, hid_num, layers_num, out_num, batch_first=True):
            super().__init__()
            self.l1 = nn.LSTM(
                input_size=input_num,
                hidden_size=hid_num,
                num_layers=layers_num,
                batch_first=batch_first
            )
            self.out = nn.Linear(hid_num, out_num)

        def forward(self, data):
            flow_x = data['flow_x']  # B * T * D
            # None means the initial hidden and cell states default to zeros
            l_out, (h_n, c_n) = self.l1(flow_x, None)
            # print(l_out[:, -1, :].shape)
            out = self.out(l_out[:, -1, :])  # use the output at the last time step
            return out

    # Define the model parameters

    input_num = 1   # input feature dimension
    hid_num = 50    # size of the hidden state
    layers_num = 3  # number of LSTM layers
    out_num = 1     # output dimension
    lstm = LSTM(input_num, hid_num, layers_num, out_num)
    loss_func = nn.MSELoss()
    optimizer = torch.optim.Adam(lstm.parameters())

    # Train the model

    lstm.train()
    epoch_loss_change = []
    for epoch in range(30):
        epoch_loss = 0.0
        start_time = time.time()
        for data_ in train_loader:
            lstm.zero_grad()
            predict = lstm(data_)
            loss = loss_func(predict, data_['flow_y'])
            epoch_loss += loss.item()
            loss.backward()
            optimizer.step()
        epoch_loss_change.append(1000 * epoch_loss / len(train_data))
        end_time = time.time()
        print("Epoch: {:04d}, Loss: {:02.4f}, Time: {:02.2f} mins".format(
            epoch, 1000 * epoch_loss / len(train_data), (end_time - start_time) / 60))
    plt.plot(epoch_loss_change)

    # Evaluate the model

    lstm.eval()
    with torch.no_grad():  # disable gradient tracking
        total_loss = 0.0
        pre_flow = np.zeros([batch_size, 1])  # [B, D], T=1; zero-filled placeholder with the target's shape
        real_flow = np.zeros_like(pre_flow)
        for data_ in test_loader:
            pre_value = lstm(data_)
            loss = loss_func(pre_value, data_['flow_y'])
            total_loss += loss.item()
            # De-normalize
            pre_value = LoadData.recoverd_data(
                pre_value.detach().numpy(),
                test_data.flow_norm[0].squeeze(1),  # max_data
                test_data.flow_norm[1].squeeze(1),  # min_data
            )
            target_value = LoadData.recoverd_data(
                data_['flow_y'].detach().numpy(),
                test_data.flow_norm[0].squeeze(1),
                test_data.flow_norm[1].squeeze(1),
            )
            pre_flow = np.concatenate([pre_flow, pre_value])
            real_flow = np.concatenate([real_flow, target_value])
        # Drop the zero-filled placeholder rows
        pre_flow = pre_flow[batch_size:]
        real_flow = real_flow[batch_size:]

    # Compute the errors
    mse = mean_squared_error(real_flow, pre_flow)
    rmse = math.sqrt(mse)
    mae = mean_absolute_error(real_flow, pre_flow)
    print('Mean squared error ---', mse)
    print('Root mean squared error ---', rmse)
    print('Mean absolute error --', mae)

    # Plot the predictions

    font_set = FontProperties(fname=r"C:\Windows\Fonts\simsun.ttc", size=15)  # SimSun, size 15, for the Chinese labels
    plt.figure(figsize=(15, 10))
    plt.plot(real_flow, label='Real_Flow', color='r')
    plt.plot(pre_flow, label='Pre_Flow')
    plt.xlabel('测试序列', fontproperties=font_set)  # "test sequence"
    plt.ylabel('交通流量/辆', fontproperties=font_set)  # "traffic flow / vehicles"
    plt.legend()

    # Save the prediction figure
    # plt.savefig('...\Desktop\123.jpg')